Agentic AI vs Generative AI: Which is Better for Enterprise Automation?

The primary difference between agentic AI and generative AI lies in autonomy and execution. Generative AI synthesizes content, code, or data based on direct user prompts, whereas agentic AI operates autonomously, reasoning through multi-step goals, interacting with external APIs, and executing complex workflows without continuous human intervention.

Introduction to the Modern AI Landscape

The rapid evolution of artificial intelligence has pushed enterprise software beyond simple statistical forecasting and into complex language and reasoning tasks. For software engineers and enterprise architects, understanding the distinction between generating data and executing actions is critical when designing automated systems. Historically, automation relied on rigid, rules-based scripts (such as Robotic Process Automation, or RPA). The introduction of large language models (LLMs) shifted this paradigm by introducing non-deterministic, probabilistic text generation.

However, generating text does not by itself automate an enterprise workflow. An LLM cannot inherently update a database schema, roll back a failed Kubernetes deployment, or autonomously negotiate a vendor contract; it can only generate the text or code required to do so. This limitation has given rise to a new architectural paradigm: Agentic AI. By wrapping generative models in execution loops with access to environmental tools and memory states, engineering teams are transitioning from static generation to dynamic, autonomous agents. Understanding the architectural differences between generative AI and agentic AI is essential for determining the right tech stack for enterprise automation.

What is Generative AI?

Generative AI refers to a class of machine learning models designed to synthesize new data—such as text, images, code, or audio—that mirrors the statistical properties of its training data. At its core, generative AI maps an input sequence (the prompt) to an output sequence using complex probability distributions. In the context of enterprise software, this almost exclusively refers to Transformer-based Large Language Models (LLMs) and diffusion models.

While highly sophisticated, generative foundation models are inherently reactive and stateless. They wait for an input trigger, generate a continuation token by token, return the result, and stop. They have no built-in goal-seeking behavior, and they do not independently initiate actions outside of the inference lifecycle.

Core Architecture and Mechanisms

The underlying architecture of most modern text-based generative AI relies on the Transformer network, specifically utilizing self-attention mechanisms. Instead of processing text sequentially, attention mechanisms allow the model to weigh the contextual importance of all tokens in an input sequence simultaneously.

Mathematically, a generative text model performs next-token prediction. Given a sequence of tokens $X = (x_1, x_2, \ldots, x_n)$, the model computes the probability distribution over the next token, $P(x_{n+1} \mid x_1, x_2, \ldots, x_n)$; a decoding strategy then selects or samples $x_{n+1}$ from that distribution, appends it to the sequence, and repeats. This calculation is purely mathematical and probabilistic. The model has no fundamental “understanding” of the text; it produces likely continuations based on its learned weights. Without an external framework, the execution loop ends the moment a special End-Of-Sequence (EOS) token is generated or a maximum length is reached.
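To make the loop concrete, here is a toy Python sketch of autoregressive generation. The `BIGRAM_PROBS` table is a hypothetical stand-in for a trained model and, unlike a Transformer, it conditions only on the last token; decoding is greedy (always take the most probable token) for simplicity.

```python
from typing import Dict, List

# Hypothetical next-token probabilities. A real LLM conditions on the whole
# prefix x_1..x_n; this toy "model" looks only at the last token.
BIGRAM_PROBS: Dict[str, Dict[str, float]] = {
    "<bos>": {"the": 0.6, "a": 0.4},
    "the": {"build": 0.7, "report": 0.3},
    "a": {"report": 1.0},
    "build": {"failed": 0.8, "<eos>": 0.2},
    "report": {"<eos>": 1.0},
    "failed": {"<eos>": 1.0},
}
EOS = "<eos>"

def next_token_distribution(tokens: List[str]) -> Dict[str, float]:
    """Return P(x_{n+1} | x_1..x_n); here approximated by P(x_{n+1} | x_n)."""
    return BIGRAM_PROBS[tokens[-1]]

def generate_greedy(max_new_tokens: int = 10) -> List[str]:
    tokens = ["<bos>"]
    for _ in range(max_new_tokens):
        dist = next_token_distribution(tokens)
        best = max(dist, key=dist.get)  # greedy decoding: most probable token
        if best == EOS:
            break                       # generation stops at the end-of-sequence token
        tokens.append(best)             # the new token becomes part of the context
    return tokens[1:]                   # drop the start marker

print(generate_greedy())  # ['the', 'build', 'failed']
```

Nothing in this loop can call an API or react to the outside world; once EOS is produced, the process simply ends.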

Key Features of Generative AI

- Pattern Synthesis: Generative models excel at recognizing patterns in massive datasets and reproducing those patterns to create fluent, contextually relevant outputs.

- Zero-Shot and Few-Shot Inference: Modern models can perform tasks they were not explicitly trained on by leveraging the vast general knowledge embedded in their neural weights.

- Stateless Execution: By default, generative models do not remember past interactions. Every API call to a generative model is an isolated event requiring the full context to be passed in the prompt.

- Non-Deterministic Output: Unlike traditional functions where a specific input always yields the exact same output, generative models sample from a probability distribution, meaning the same prompt can yield different outputs (controlled by hyperparameters like temperature and top-p).
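The non-determinism comes from the decoding step. The following Python sketch shows one common way temperature and top-p (nucleus) sampling are combined; the token scores are made up for illustration, and treating temperature 0 as greedy decoding is an assumed convention. Production inference servers implement the same idea over full vocabularies with tensor libraries.

```python
import math
import random
from typing import Dict, Optional

def sample_next_token(logits: Dict[str, float], temperature: float = 1.0,
                      top_p: float = 1.0, rng: Optional[random.Random] = None) -> str:
    """Pick the next token from raw scores using temperature and top-p sampling."""
    rng = rng or random.Random()
    if temperature <= 0:
        # Assumed convention: temperature 0 means greedy (argmax) decoding.
        return max(logits, key=logits.get)
    # Temperature-scaled softmax; subtracting the max keeps exp() numerically stable.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    ranked = sorted(((tok, e / total) for tok, e in exps.items()),
                    key=lambda pair: pair[1], reverse=True)
    # Top-p: keep the smallest set of most-likely tokens whose cumulative probability >= top_p.
    nucleus, cumulative = [], 0.0
    for tok, p in ranked:
        nucleus.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break
    tokens, weights = zip(*nucleus)
    # random.choices treats weights as relative, so the truncated nucleus need not sum to 1.
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for three candidate next tokens.
logits = {"approved": 2.0, "rejected": 1.0, "pending": 0.1}
print(sample_next_token(logits, temperature=0))  # approved
rng = random.Random(7)
print([sample_next_token(logits, temperature=1.0, top_p=0.9, rng=rng) for _ in range(5)])
```

Lower temperatures sharpen the distribution toward the top token, and a lower `top_p` discards the unlikely tail, which is why the same prompt can produce different outputs unless both are pinned down.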

Common Enterprise Use Cases

Generative AI is highly effective for tasks that require data transformation, summarization, or synthesis. In enterprise environments, standard implementations include:

- Code Scaffolding and Boilerplate Generation: Assisting developers by generating structural code, unit tests, or documentation based on inline comments.

- Unstructured Data Extraction: Parsing raw, unstructured documents (like PDFs or emails) and converting them into structured JSON formats for downstream processing.

- Knowledge Base Retrieval (Retrieval-Augmented Generation, or RAG): Enhancing search by retrieving relevant documents from an internal corpus and having the model summarize them, providing direct answers rather than a list of hyperlinks.
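Because a generative model returns text, extracted JSON is only useful downstream once it has been parsed and checked. Below is a minimal Python sketch of that validation step, assuming the prompt asked for a JSON object with the hypothetical fields `invoice_id`, `vendor`, and `total`; production systems often use a schema library (such as JSON Schema or Pydantic) instead of hand-written checks.

```python
import json
from typing import Any, Dict

# Hypothetical target schema for an invoice-extraction prompt.
REQUIRED_FIELDS = {"invoice_id": str, "vendor": str, "total": (int, float)}

def parse_extraction(raw_model_output: str) -> Dict[str, Any]:
    """Parse a model's JSON reply and check required fields before downstream use."""
    try:
        data = json.loads(raw_model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model did not return valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing required field: {name}")
        value = data[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"field {name!r} has unexpected type {type(value).__name__}")
    return data

reply = '{"invoice_id": "INV-1042", "vendor": "Acme Corp", "total": 1299.5}'
print(parse_extraction(reply)["total"])  # 1299.5
```

In a purely generative setup, a `ValueError` here is where a human has to step in; the model itself never sees the failure.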

Limitations in Automation

Despite its power, generative AI on its own falls short of true enterprise automation. Because it is stateless and reactive, it requires a “Human-in-the-Loop” (HitL) to validate outputs and manually execute the subsequent steps in a workflow. Furthermore, generative models suffer from “hallucinations”: confidently asserting incorrect information. If a generative model outputs an incorrect API payload, it has no built-in way to read the resulting HTTP 400 error, debug its own payload, and retry the request. It simply generates the text and stops.

What is Agentic AI?

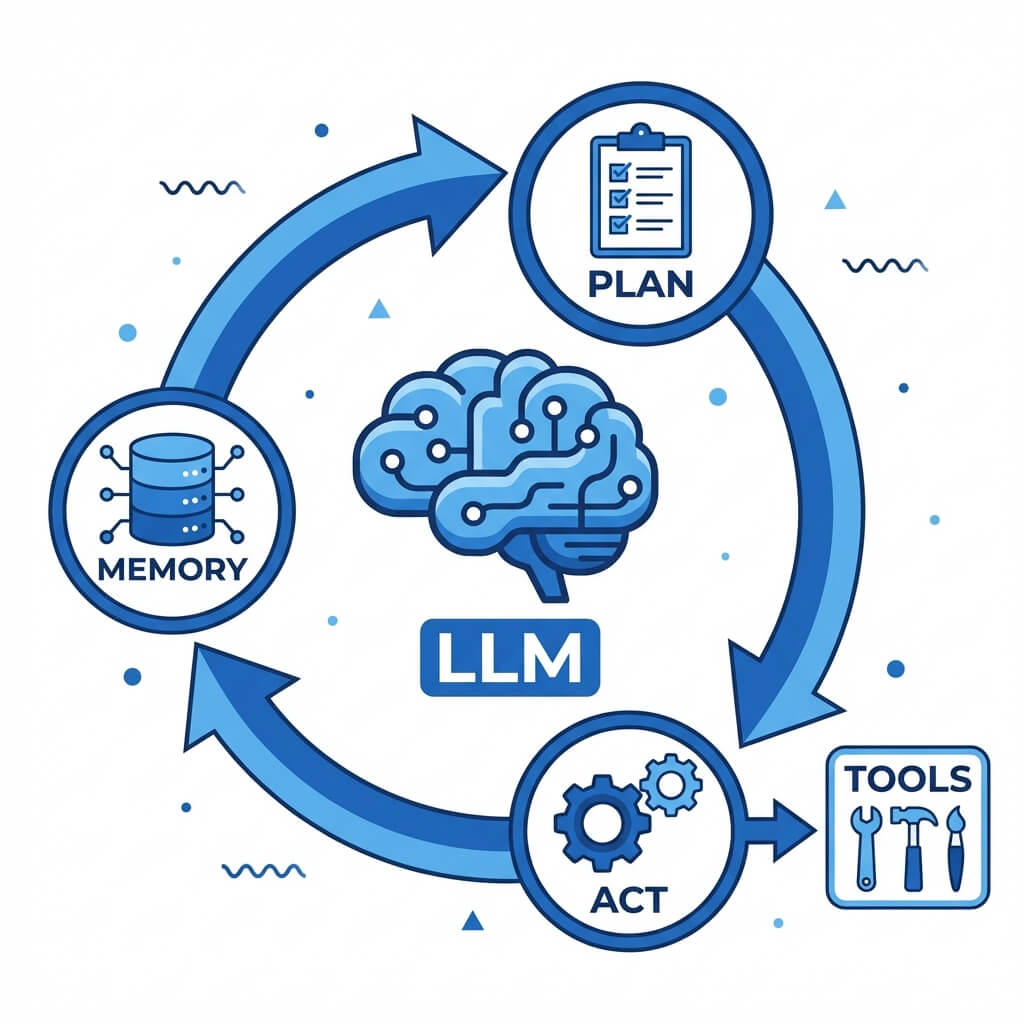

Agentic AI represents a systemic architectural layer built on top of generative models. If generative AI is the “brain” capable of linguistic reasoning and data synthesis, Agentic AI provides the “hands” (tool use), “memory” (statefulness), and “executive function” (planning and autonomy) required to act upon an environment. Agentic systems do not merely answer questions; they are given high-level objectives and are expected to autonomously plan, execute, and course-correct until the objective is achieved.

Designing agentic AI involves wrapping an LLM in an orchestration loop—often utilizing frameworks like LangChain, LlamaIndex, or AutoGPT. The system evaluates its current state, formulates a plan, triggers external APIs, observes the result, and iterates. This shift from simple prompt-response mechanisms to iterative reasoning loops is what enables true enterprise automation.

Core Architecture: Moving Beyond Generation to Action

The architecture of an AI agent is significantly more complex than a standard LLM inference pipeline. A robust agentic system typically consists of four core components:

- The Reasoning Engine: A highly capable LLM acts as the central processor. It is responsible for parsing goals, analyzing data, and deciding which actions to take.

- Memory Management: Agents require both short-term memory (the conversation and intermediate results held in the model's context window) and long-term memory (typically implemented with vector databases or vector search extensions such as Pinecone, Milvus, or pgvector). This allows the agent to recall past actions, user preferences, and historical API responses.

- Tooling and Actuators: Agents are equipped with executable functions. These can range from simple web search tools to complex, authenticated enterprise API clients (e.g., executing SQL queries, managing AWS infrastructure, or creating Jira tickets).

- The Execution Loop: Agents utilize cognitive architectures such as ReAct (Reasoning and Acting). The loop follows a strict sequence: Observation -> Thought -> Action. The agent observes the environment, thinks about the next logical step, executes an action using a tool, observes the new state, and repeats until the termination criteria are met.
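The execution loop can be sketched in a few lines of Python. Here `scripted_reasoner` is a hypothetical stand-in for the LLM (a real system would prompt the model with the goal and history, then parse its chosen action), `search_tickets` is an invented tool, and the `max_steps` cap acts as the termination guard.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

@dataclass
class Action:
    tool: str            # tool name to invoke, or "finish" to stop
    argument: str = ""   # a single string argument keeps the sketch simple

History = List[Tuple[Action, str]]  # (action taken, observation returned)

def search_tickets(query: str) -> str:
    """Hypothetical tool; a real one would call an authenticated ticketing API."""
    return f"3 open tickets match {query!r}"

TOOLS: Dict[str, Callable[[str], str]] = {"search_tickets": search_tickets}

def scripted_reasoner(goal: str, history: History) -> Action:
    """Stand-in for the reasoning engine: chooses the next action from what it has observed."""
    if not history:
        return Action("search_tickets", goal)
    return Action("finish", f"Answer: {history[-1][1]}")

def run_agent(goal: str, reasoner: Callable[[str, History], Action],
              tools: Dict[str, Callable[[str], str]], max_steps: int = 5) -> str:
    history: History = []
    for _ in range(max_steps):
        action = reasoner(goal, history)             # Thought: decide the next step
        if action.tool == "finish":
            return action.argument                   # termination criterion met
        tool = tools.get(action.tool)
        observation = (tool(action.argument) if tool
                       else f"error: unknown tool {action.tool!r}")
        history.append((action, observation))        # Observation: fed into the next Thought
    return "stopped: step limit reached"

print(run_agent("exposed S3 buckets", scripted_reasoner, TOOLS))
# Answer: 3 open tickets match 'exposed S3 buckets'
```

Note that an unknown tool produces an error observation instead of crashing, so the reasoning engine gets a chance to choose differently on the next iteration.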

Key Features of AI Agents

- Goal-Oriented Autonomy: Instead of providing step-by-step instructions, users provide an end-state goal (e.g., “Audit the current AWS environment for exposed S3 buckets and generate a Jira ticket for each”). The agent handles the intermediate steps.

- Self-Reflection and Error Correction: If an agent attempts to execute an SQL query and receives a syntax error, it can feed that error back into its reasoning engine, rewrite the query, and try again autonomously.

- Multi-Agent Orchestration: Complex enterprise workflows often deploy specialized agents (e.g., a “Researcher Agent,” a “Coder Agent,” and a “QA Agent”) that interact with one another to complete large, cross-functional tasks.
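Self-correction boils down to a retry loop that feeds the error text back into the reasoning engine. In this sketch, built on Python's standard `sqlite3` module, `stub_rewrite` is a hypothetical hard-coded stand-in for the LLM call (it only knows how to fix one typo), and the table and query are invented for illustration.

```python
import sqlite3
from typing import Callable, List, Tuple

def stub_rewrite(query: str, error: str) -> str:
    """Hypothetical stand-in for the LLM: a real agent would send the query and the
    error message to the reasoning engine and ask for a corrected query."""
    if "no such column" in error and "stauts" in query:
        return query.replace("stauts", "status")
    return query

def execute_with_retries(conn: sqlite3.Connection, query: str,
                         rewrite: Callable[[str, str], str],
                         max_attempts: int = 3) -> Tuple[list, List[str]]:
    errors: List[str] = []
    for _ in range(max_attempts):
        try:
            return conn.execute(query).fetchall(), errors
        except sqlite3.Error as exc:          # e.g., OperationalError for invalid SQL
            errors.append(str(exc))
            query = rewrite(query, str(exc))  # feed the error back, then retry
    raise RuntimeError(f"giving up after {max_attempts} attempts: {errors}")

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE tickets (id INTEGER, status TEXT)")
conn.executemany("INSERT INTO tickets VALUES (?, ?)", [(1, "open"), (2, "closed")])
rows, errors = execute_with_retries(
    conn, "SELECT id FROM tickets WHERE stauts = 'open'", stub_rewrite)
print(rows)         # [(1,)]
print(len(errors))  # 1
```

The `max_attempts` bound matters: without it, a rewrite that never fixes the problem would retry forever.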

Common Enterprise Use Cases

Agentic AI shines in environments where workflows are dynamic and involve interacting with multiple distinct systems:

- Autonomous CI/CD Remediation: When a build pipeline fails, an agent can autonomously read the stack trace, parse the relevant repository code, write a patch, test it in an isolated container, and submit a pull request.

- Dynamic Data Engineering: Agents can monitor incoming data streams, autonomously deduce schema changes, and rewrite ETL pipelines on the fly to prevent data ingestion failures.

- Intelligent Customer Resolution: Moving beyond static chatbots, agentic customer support can authenticate a user, query shipping APIs, issue refunds via a payment gateway, and update CRM records without human intervention.

Challenges in Deployment

Deploying Agentic AI introduces significant engineering hurdles. Because agents operate in iterative loops, they consume vastly more tokens than standard generative AI, leading to high inference costs. Furthermore, granting an autonomous system write-access to enterprise APIs introduces severe security and compliance risks. Infinite loops—where an agent repeatedly fails a task but continues to retry—can result in massive API billing spikes and system degradation.
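One common mitigation is a hard budget per run. The Python sketch below (the `RunBudget` name and its default limits are hypothetical) halts an agent when it exceeds a step count or a token total, or when it hits the same error several times in a row, a typical signature of a runaway retry loop.

```python
from dataclasses import dataclass, field
from typing import Optional

class BudgetExceeded(RuntimeError):
    """Raised to halt an agent run before it burns unbounded tokens or API calls."""

@dataclass
class RunBudget:
    max_steps: int = 20
    max_tokens: int = 50_000
    max_repeated_errors: int = 3
    steps: int = 0
    tokens: int = 0
    _last_error: Optional[str] = field(default=None, repr=False)
    _repeats: int = field(default=0, repr=False)

    def charge(self, tokens_used: int) -> None:
        """Record one loop iteration and its token cost."""
        self.steps += 1
        self.tokens += tokens_used
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.tokens > self.max_tokens:
            raise BudgetExceeded(f"token limit {self.max_tokens} exceeded")

    def record_outcome(self, error: Optional[str]) -> None:
        """Track consecutive identical failures; a success resets the counter."""
        if error is None:
            self._last_error, self._repeats = None, 0
            return
        self._repeats = self._repeats + 1 if error == self._last_error else 1
        self._last_error = error
        if self._repeats >= self.max_repeated_errors:
            raise BudgetExceeded(f"same error {self.max_repeated_errors} times: {error}")

budget = RunBudget(max_steps=3, max_tokens=10_000)
try:
    for _ in range(10):                       # stand-in for the agent loop
        budget.charge(tokens_used=1_200)
        budget.record_outcome("HTTP 400: invalid payload")
except BudgetExceeded as exc:
    print("halted:", exc)  # halted: same error 3 times: HTTP 400: invalid payload
```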

Agentic AI vs Generative AI: Key Differences

To successfully architect an enterprise system, engineering teams must clearly delineate between the capabilities of a standalone generative model and a comprehensive agentic workflow. The distinction is not merely semantic; it dictates the infrastructure, the security protocols, and the expected return on investment.

Generative AI is a component—a primitive—upon which Agentic AI is built. Comparing them directly is akin to comparing a high-performance engine to an autonomous vehicle. The engine (Generative AI) provides the raw processing power and intelligence, but the vehicle (Agentic AI) encompasses the sensors, the chassis, the steering, and the navigation logic required to reach a destination.

Goal Orientation vs. Task Execution

With generative AI, the user must act as the orchestrator. If the objective is to write a weekly report based on Jira tickets, the user must manually export the Jira data, paste it into the LLM context window, prompt the model to summarize, and then manually paste the output into an email client. The LLM simply executed a narrow, isolated task: summarization.

Agentic AI operates on goal orientation. The prompt is shifted from a task directive to a goal directive. The user instructs the agent to “Send a summary of all high-priority bugs closed this week to the engineering team.” The agent must decompose this goal into a sub-task graph:

- Authenticate with the Jira API.

- Construct and execute the JQL query.

- Parse the JSON response.

- Synthesize the text (utilizing the generative engine).

- Authenticate with the SMTP server.

- Send the email.
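A sub-task graph like this one can be represented with Python's standard-library `graphlib` module (Python 3.9+). The task names are illustrative, and the sketch only computes an execution plan: independent steps (the two authentication calls) land in the same batch and could run concurrently. It does not call Jira or an SMTP server.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each sub-task maps to the sub-tasks that must finish before it can start.
SUBTASKS: Dict[str, Set[str]] = {
    "auth_jira": set(),
    "run_jql_query": {"auth_jira"},
    "parse_json_response": {"run_jql_query"},
    "synthesize_summary": {"parse_json_response"},  # the generative step
    "auth_smtp": set(),
    "send_email": {"synthesize_summary", "auth_smtp"},
}

def plan_batches(graph: Dict[str, Set[str]]) -> List[Set[str]]:
    """Group sub-tasks into batches; tasks in one batch have no mutual dependencies."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the plan is circular
    batches: List[Set[str]] = []
    while sorter.is_active():
        ready = sorter.get_ready()
        batches.append(set(ready))
        sorter.done(*ready)  # a real agent would mark a task done only after it succeeds
    return batches

for i, batch in enumerate(plan_batches(SUBTASKS), start=1):
    print(i, sorted(batch))
```

Making dependencies explicit also gives the agent a natural place to stop: if `run_jql_query` fails, nothing downstream of it is ever scheduled.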

Statefulness and Memory Management

A standalone generative model has no memory of a prompt sent to it five minutes ago unless that history is manually appended to the new prompt. This statelessness is highly efficient for serving millions of independent queries but detrimental to complex workflows.

Agentic AI systems implement persistent memory layers. Short-term memory is often handled through sliding-window context buffers, while long-term memory utilizes semantic search over vector embeddings. This allows an agent working on a multi-day software migration to “remember” constraints discovered on day one and apply them to refactoring tasks on day three.
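A minimal Python sketch of the two memory tiers follows. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and the in-process list stands in for a vector database; the point is only the structure: a bounded buffer of recent turns plus similarity search over everything stored.

```python
import math
from collections import Counter, deque
from typing import Deque, List, Tuple

def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())  # missing words count as 0
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class AgentMemory:
    def __init__(self, window_size: int = 4):
        # Short-term: only the most recent turns, as would fit in the context window.
        self.short_term: Deque[str] = deque(maxlen=window_size)
        # Long-term: every note, searchable by similarity.
        self.long_term: List[Tuple[Counter, str]] = []

    def remember(self, text: str) -> None:
        self.short_term.append(text)
        self.long_term.append((embed(text), text))

    def recall(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self.long_term, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]

memory = AgentMemory(window_size=2)
memory.remember("day 1: legacy billing service must stay on java 8")
memory.remember("day 2: migrated the auth module")
memory.remember("day 3: started refactoring the payments module")
print(list(memory.short_term))  # day 1 has fallen out of the sliding window...
print(memory.recall("which java version does billing need"))  # ...but is still recallable
```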

Tool Integration and API Interaction

The most distinct technical boundary between the two paradigms is tool use (often referred to as function calling). Generative models output strings of text. If a generative model outputs text that looks like a Python script, it is still just text on a screen.

Agentic AI systems intercept the generative output, recognize it as an executable command, and route it to an execution environment (like a secure Docker container or an API gateway). When the function executes, the agent captures the standard output (stdout) or standard error (stderr) and feeds it back into the LLM as a new observation. This bi-directional communication with external environments is the defining characteristic of an agent.
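A minimal Python sketch of the interception step is shown below. The JSON shape `{"tool": ..., "args": {...}}` is a hypothetical convention (each model provider defines its own function-calling format), and `run_python` uses a plain subprocess only to demonstrate capturing stdout and stderr; a subprocess is not a security sandbox.

```python
import json
import subprocess
import sys
from typing import Any, Callable, Dict

def run_python(code: str, timeout: float = 5.0) -> Dict[str, Any]:
    """Execute a snippet in a separate interpreter and capture its output.
    NOT a sandbox: production agents would run this in an isolated container."""
    try:
        proc = subprocess.run([sys.executable, "-c", code],
                              capture_output=True, text=True, timeout=timeout)
    except subprocess.TimeoutExpired:
        return {"returncode": None, "stdout": "", "stderr": f"timed out after {timeout}s"}
    return {"returncode": proc.returncode, "stdout": proc.stdout, "stderr": proc.stderr}

TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {"run_python": run_python}

def handle_model_output(model_output: str) -> str:
    """Route a tool call to its implementation; pass plain text through unchanged."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return model_output                      # ordinary text, nothing to execute
    if not isinstance(call, dict) or "tool" not in call:
        return model_output                      # valid JSON, but not a tool call
    tool = TOOLS.get(call["tool"])
    if tool is None:
        return json.dumps({"error": f"unknown tool {call['tool']!r}"})
    result = tool(**call.get("args", {}))
    return json.dumps(result)                    # becomes the LLM's next observation

print(handle_model_output('{"tool": "run_python", "args": {"code": "print(6 * 7)"}}'))
```

A failing snippet such as `1/0` comes back with a non-zero return code and a traceback in `stderr`, which is exactly the feedback the reasoning engine needs to revise its next attempt.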

Which is Better for Enterprise Automation?

When addressing the core question of which approach is better for enterprise automation, the answer points to Agentic AI, but with an important nuance. Generative AI is not obsolete; it is simply better suited to a different category of enterprise optimization, human augmentation, whereas Agentic AI is designed for end-to-end automation.

Deploying the correct architecture depends entirely on the required level of human involvement, the complexity of the workflow, and the organization’s risk tolerance.

When to Deploy Generative AI

Generative AI should be the architecture of choice when the output requires deep human oversight, creativity, or strategic judgment before action is taken.

- Drafting and Ideation: Marketing copy, technical documentation, and strategic memos. A human must review the generated text for brand tone and accuracy.

- Code Assist (Copilots): Inline code-completion tools like GitHub Copilot operate as generative AI tools. They suggest code snippets directly in the IDE, but the developer must explicitly accept the suggestion and commit the code. This prevents the AI from breaking the build autonomously.

- Data Translation and Formatting: Converting legacy COBOL syntax to Java, or translating a complex SQL query into plain English for business analysts.

In these scenarios, the overhead of building an autonomous agent is unnecessary. The goal is simply to accelerate human workflows, not replace them.

When to Deploy Agentic AI

Agentic AI is the superior choice for high-volume, multi-step operations that are largely deterministic but require dynamic routing or contextual reasoning that traditional RPA cannot handle.

- IT Service Management (ITSM): Automating Level-1 and Level-2 support tickets. An agent can read a password reset request, verify identity via an HR database API, trigger an Active Directory script, and email the user the temporary password, closing the ticket without human intervention.

- Continuous Security Auditing: Agents can continuously poll cloud environments, evaluate configurations against compliance frameworks (like SOC 2 or HIPAA), and autonomously execute remediation scripts to close security gaps as soon as they appear.

- Complex Data Pipelines: If an upstream data provider unexpectedly changes their JSON payload structure, a traditional static ETL pipeline will fail. An agentic pipeline can recognize the failure, analyze the new schema, rewrite the parsing logic, and backfill the missed data.
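The detection half of the data-pipeline scenario is deterministic and can be sketched in Python as below. The field names are hypothetical, and the step in which the agent rewrites the parsing logic (an LLM call) is deliberately left out; the drift report is the kind of structured observation the agent would hand to its reasoning engine.

```python
from typing import Any, Dict, List

def detect_schema_drift(expected: Dict[str, type], payload: Dict[str, Any]) -> Dict[str, List[str]]:
    """Compare an incoming JSON record with the schema the pipeline was built for."""
    return {
        "missing": sorted(k for k in expected if k not in payload),
        "unexpected": sorted(k for k in payload if k not in expected),
        "type_changed": sorted(
            k for k, t in expected.items() if k in payload and not isinstance(payload[k], t)
        ),
    }

EXPECTED = {"order_id": str, "amount": float, "currency": str}
incoming = {"order_id": "A-1001", "amount_cents": 129900, "currency": "USD"}

drift = detect_schema_drift(EXPECTED, incoming)
if any(drift.values()):
    print("schema drift detected:", drift)
    # schema drift detected: {'missing': ['amount'], 'unexpected': ['amount_cents'], 'type_changed': []}
```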

Architectural Synergy: Combining Both Paradigms

It is crucial for enterprise architects to understand that you cannot have Agentic AI without Generative AI. The most sophisticated enterprise solutions utilize architectural synergy.

For instance, in an automated customer support workflow, an Agentic framework handles the routing, API calls to the billing system, and database lookups. Once the agent has compiled the necessary data (e.g., user account status, refund eligibility), it passes that structured data to a Generative AI module. The generative module is tasked solely with synthesizing a polite, grammatically correct email summarizing the actions taken. The agent then takes that generated text and sends it via an email API.
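The hand-off in this example can be sketched in Python. `generate` and `send_email` are hypothetical callables standing in for an LLM client and an email API client, and the dataclass fields are invented for illustration; the point is that the generative module receives only facts the agent has already verified.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, List

@dataclass
class ResolutionFacts:
    """Structured results the agent gathered from billing and account APIs (hypothetical fields)."""
    customer_name: str
    account_status: str
    refund_eligible: bool
    refund_amount: float
    actions_taken: List[str]

def build_email_prompt(facts: ResolutionFacts) -> str:
    # Constraining the model to verified facts limits, but does not eliminate, hallucination risk.
    return ("Write a short, polite email to the customer summarizing the actions below. "
            "Use only these facts and do not promise anything else.\n"
            + json.dumps(asdict(facts), indent=2))

def compose_and_send(facts: ResolutionFacts,
                     generate: Callable[[str], str],
                     send_email: Callable[[str], None]) -> str:
    body = generate(build_email_prompt(facts))  # synthesis: the generative model's only job
    send_email(body)                            # execution: handled by the agent's email tool
    return body

outbox: List[str] = []
facts = ResolutionFacts("Dana", "active", True, 42.50, ["verified order", "issued refund"])
compose_and_send(facts,
                 generate=lambda prompt: "Dear Dana, your refund of $42.50 has been issued.",
                 send_email=outbox.append)
print(outbox)
```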

By compartmentalizing the logic—using agents for execution and generative models for synthesis—enterprises can build highly resilient, scalable, and automated systems.

Frequently Asked Questions (FAQ)

Is Agentic AI just Generative AI combined with APIs?

At a high level, yes, but this simplification ignores the complex cognitive architectures required to make it work. Agentic AI requires sophisticated orchestration loops (like ReAct or Plan-and-Solve), state management, memory retrieval systems, and robust error-handling logic. Simply hard-coding an LLM to trigger a webhook does not make it an agent; the system must possess the autonomy to plan and adapt to the API’s response.

How do you evaluate the performance of Agentic AI systems?

Evaluating generative AI typically involves linguistic metrics (like ROUGE or BLEU scores) or human-in-the-loop subjective scoring. Agentic AI, however, is evaluated on task completion rates and efficiency. Key metrics include the success rate of achieving the end goal, the number of steps (or API calls) taken to reach the goal, the token consumption per task, and the ability to successfully recover from unexpected environmental errors.
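These metrics are straightforward to compute once each run is logged. The Python sketch below assumes a hypothetical `RunRecord` log format, and the four runs are illustrative values, not measured data.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional

@dataclass
class RunRecord:
    """One logged agent run (fields are an assumed logging format)."""
    succeeded: bool
    steps: int
    tokens: int
    errors_encountered: int

def summarize_runs(runs: List[RunRecord]) -> Dict[str, Optional[float]]:
    if not runs:
        raise ValueError("no runs to evaluate")
    with_errors = [r for r in runs if r.errors_encountered > 0]
    return {
        "success_rate": sum(r.succeeded for r in runs) / len(runs),
        "mean_steps": mean(r.steps for r in runs),
        "mean_tokens_per_task": mean(r.tokens for r in runs),
        # Recovery: share of runs that hit at least one error yet still reached the goal.
        "recovery_rate": (sum(r.succeeded for r in with_errors) / len(with_errors)
                          if with_errors else None),
    }

runs = [
    RunRecord(succeeded=True, steps=4, tokens=1_000, errors_encountered=0),
    RunRecord(succeeded=True, steps=6, tokens=1_800, errors_encountered=2),
    RunRecord(succeeded=False, steps=10, tokens=4_000, errors_encountered=3),
    RunRecord(succeeded=True, steps=3, tokens=800, errors_encountered=0),
]
print(summarize_runs(runs))
```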

What are the security implications of deploying Agentic AI?

Because agents can autonomously execute code and call APIs, they introduce significant new attack vectors, notably Prompt Injection and Confused Deputy attacks. If a malicious user injects a prompt that tricks the agent into believing its goal is to drop a database table, an improperly secured agent will execute the SQL command. Security requires enforcing the “Principle of Least Privilege,” executing agent-generated code in ephemeral, isolated sandboxes, and using human-in-the-loop approval gates for destructive actions (like deleting data or authorizing payments).
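An approval gate can be as simple as a wrapper around the tool call. In this Python sketch, `execute` and `approve` are hypothetical callables (a database client and a human review step, respectively). The keyword filter is deliberately conservative but easy to bypass, so it is only a last line of defense; the primary control remains a least-privilege database role that cannot drop tables at all.

```python
import re
from typing import Callable, TypeVar

T = TypeVar("T")

# Conservative keyword check: any of these words anywhere in the statement triggers review.
DESTRUCTIVE_SQL = re.compile(r"\b(DROP|DELETE|TRUNCATE|ALTER|UPDATE|GRANT)\b", re.IGNORECASE)

class ApprovalDenied(PermissionError):
    """Raised when a human reviewer rejects (or does not approve) a destructive action."""

def guarded_execute(sql: str, execute: Callable[[str], T],
                    approve: Callable[[str], bool]) -> T:
    """Run non-destructive SQL directly; route anything that looks destructive to a human."""
    if DESTRUCTIVE_SQL.search(sql) and not approve(sql):
        raise ApprovalDenied(f"blocked pending human approval: {sql!r}")
    return execute(sql)

executed = []
guarded_execute("SELECT * FROM orders", executed.append, approve=lambda s: False)
try:
    guarded_execute("SELECT 1; drop table orders", executed.append, approve=lambda s: False)
except ApprovalDenied as exc:
    print(exc)
print(executed)  # ['SELECT * FROM orders']
```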

Can I build an AI Agent using open-source models?

Yes. While proprietary models like GPT-4 or Claude 3 are highly adept at function calling and reasoning, open-source models like Llama 3 or Mixtral are increasingly capable of powering agentic workflows. However, smaller open-source models may struggle with complex, multi-step reasoning and require fine-tuning specifically for function calling (using frameworks like Gorilla or specialized tool-calling datasets) to match the reliability of enterprise-grade commercial models.