What is a Learning Agent in AI? Components & Uses

A learning agent in AI is an artificial intelligence entity capable of acquiring new knowledge, adapting to novel environments, and improving its performance over time through experience. Unlike static rule-based systems, learning agents autonomously update their internal models to resolve unknown scenarios efficiently.

Introduction to Learning Agents

Historically, artificial intelligence systems relied heavily on hardcoded rules and heuristic programming. These traditional models, such as simple reflex agents or basic goal-based agents, operate effectively within fully observable, deterministic environments where every possible state and optimal action can be anticipated by the programmer. However, when deployed in complex, dynamic, or partially observable environments, these rigid architectures fail. This limitation necessitates a paradigm shift toward systems capable of autonomous adaptation: the learning agent.

A learning agent in AI solves the problem of unforeseen environmental states by separating the execution of a task from the improvement of the task’s performance. Rather than requiring human engineers to manually update its knowledge base or decision-making algorithms whenever the environment changes, a learning agent relies on algorithmic feedback loops to adjust its internal parameters. By leveraging continuous feedback streams, mathematical optimization techniques, and probabilistic models, learning agents can formulate new strategies, discover hidden patterns in data, and optimize their utility functions without explicit human intervention.

Understanding the underlying architecture, mathematical principles, and practical implementations of learning agents is fundamental for engineers building modern, resilient AI systems.

How Does a Learning Agent Work in Artificial Intelligence?

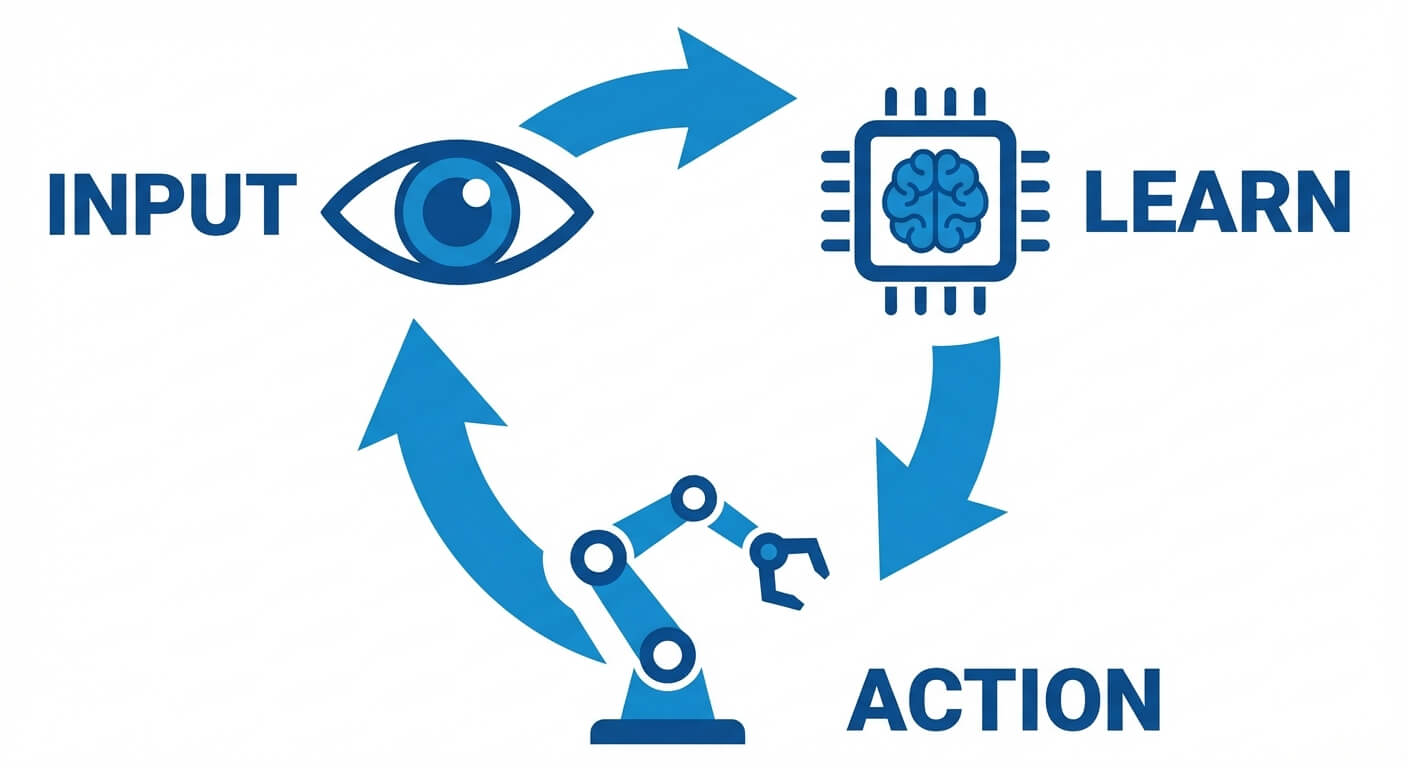

At a fundamental level, an artificial intelligence agent interacts with an environment through sensors (which perceive the state of the environment) and effectors or actuators (which execute actions that alter the state of the environment). In a non-learning agent, the mapping between percepts and actions is fixed by the initial programming. In a learning agent, this mapping is dynamic and continuously optimized.

The learning process is an iterative cycle of perception, action, evaluation, and adaptation. When a learning agent perceives a new state, it selects an action based on its current, potentially suboptimal, knowledge. Once the action is executed, the environment transitions to a new state and returns a signal—often in the form of a reward, penalty, or simple state observation.

The learning agent processes this feedback by comparing the actual outcome of its action against its expected outcome. If a discrepancy (error) exists, the agent uses an optimization algorithm to adjust the weights, parameters, or logical rules that govern its decisions. Over many iterations this error typically shrinks and the agent's decisions improve, although convergence to a global optimum is not guaranteed in general.
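The cycle described above can be sketched with a single adjustable parameter. This is a toy illustration, not a full agent: the fixed `true_outcome`, the `step_size`, and the function name are all assumptions made for the example.

```python
def run_learning_loop(true_outcome=10.0, step_size=0.5, iterations=20):
    """Toy perceive/act/evaluate/adapt cycle with one learned parameter."""
    expected = 0.0  # the agent's initial, poor prediction of the outcome
    errors = []
    for _ in range(iterations):
        actual = true_outcome          # feedback returned by the environment
        error = actual - expected      # discrepancy between actual and expected
        expected += step_size * error  # adaptation: move the prediction toward reality
        errors.append(abs(error))
    return expected, errors


final_estimate, errors = run_learning_loop()
print(final_estimate, errors[0], errors[-1])
```

In this hypothetical setting the error halves on every pass because the environment is fixed and noise-free. Real environments are rarely that cooperative.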

Core Components of a Learning Agent

The architecture of a learning agent is modular, typically divided into four primary components. This conceptual framework, popularized by Stuart Russell and Peter Norvig in Artificial Intelligence: A Modern Approach, lets the agent both execute its current tasks and experiment to improve future performance.

1. The Performance Element

The performance element is the execution engine of the AI agent. It is responsible for selecting the external actions the agent will take based on the current percepts from the environment. In traditional, non-learning agents, this component constitutes the entirety of the agent.

In a learning context, the performance element uses a continually updated model, such as a neural network, a decision tree, or a policy learned for a Markov Decision Process (MDP), to map the state space to the action space. For example, if the performance element is parameterized by a weight vector W, it computes an output (the action) via a function f(X; W), where X represents the input state. As learning progresses, the learning element modifies W, which changes how the performance element behaves in the future.
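As a minimal sketch of f(X; W), assume a linear scorer with one row of weights per action. The function name and the numbers are illustrative only.

```python
def performance_element(state, weights):
    """Return the index of the action with the highest linear score f(X; W)."""
    scores = [sum(w * x for w, x in zip(row, state)) for row in weights]
    return max(range(len(scores)), key=scores.__getitem__)


state = [1.0, 0.5]
weights = [[0.2, 0.1], [0.5, -0.2]]          # scores: 0.25 and 0.40
updated_weights = [[0.6, 0.1], [0.5, -0.2]]  # scores: 0.65 and 0.40

print(performance_element(state, weights))          # picks action 1
print(performance_element(state, updated_weights))  # picks action 0
```

The state is identical in both calls. Only W changed, which is exactly the lever the learning element pulls.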

2. The Critic

The critic serves as the internal evaluation mechanism. While the environment provides raw feedback (which can sometimes be sparse or delayed), the critic translates this external feedback into a structured evaluation metric based on a fixed performance standard.

The performance standard is an immutable objective provided by the system designers, representing what the agent is ultimately trying to achieve (e.g., “maximize trading profit” or “minimize navigation time”). The critic measures the gap between the performance element’s current behavior and this objective standard. It then generates a feedback signal indicating whether the agent’s recent action was beneficial or detrimental. Without the critic, the learning element would have no quantifiable metric to determine the direction or magnitude of its necessary updates.
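A critic can be as simple as a function that scores raw feedback against the fixed standard. The sketch below assumes a "minimize navigation time" objective with a hypothetical 60-second target; both the target and the scaling are illustrative choices.

```python
def critic(elapsed_seconds, target_seconds=60.0):
    """Score one run against a fixed performance standard.

    Positive values mean the agent beat the target time,
    negative values mean it was slower than the target.
    """
    return (target_seconds - elapsed_seconds) / target_seconds


print(critic(45.0))  # faster than the target, positive signal
print(critic(90.0))  # slower than the target, negative signal
```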

3. The Learning Element

The learning element is the mathematical core responsible for the actual “learning” or adaptation. It receives the evaluated feedback signal from the critic and determines how the performance element should be modified to improve future utility.

This component employs optimization algorithms to update the agent’s internal models. If the agent uses a parameterized function (like a deep neural network), the learning element calculates the gradient of the loss or error and updates the weights. A common mathematical representation of this update process is gradient descent, expressed as:

W = W – α ∇J(W)

Here, W represents the model weights, α is the learning rate (step size), and ∇J(W) is the gradient of the cost function J with respect to the weights. Applied repeatedly with a suitable learning rate, these updates move the weights toward a minimum of J, which may be only a local one, so the performance element tends to become more accurate over time.
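To make the update rule concrete, the sketch below applies it to an assumed one-dimensional cost J(w) = (w − 3)², whose gradient is 2(w − 3). The cost function, starting point, and learning rate are chosen purely for illustration.

```python
def gradient(w):
    """Gradient of the illustrative cost J(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


def gradient_descent(w, learning_rate=0.1, steps=100):
    """Repeatedly apply W = W - alpha * grad J(W)."""
    for _ in range(steps):
        w = w - learning_rate * gradient(w)
    return w


w_final = gradient_descent(0.0)
print(w_final)  # approaches the minimizer w = 3
```

With this cost each step shrinks the distance to the minimum by a factor of 0.8. A learning rate above 1.0 would make the same loop diverge, which is why α needs tuning.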

4. The Problem Generator

A major challenge in artificial intelligence is the risk of an agent converging on a local optimum. If an agent only executes actions that it currently believes to be the best (pure exploitation), it may never discover novel, highly rewarding actions (exploration).

The problem generator forces the performance element to take suboptimal or entirely new actions to explore the environment. By injecting calculated stochasticity or specific exploratory heuristics into the decision-making process, the problem generator ensures the agent discovers the full potential of its state-action space. Common techniques implemented by the problem generator include ε-greedy strategies (where the agent chooses a random action with a probability of ε) or Upper Confidence Bound (UCB) algorithms.
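Both techniques fit in a few lines. The sketch below assumes the agent already tracks a value estimate per action, plus visit counts for UCB. The function names are hypothetical, and the UCB variant shown is the common UCB1 form.

```python
import math
import random


def epsilon_greedy(q_values, epsilon, rng):
    """With probability epsilon pick a random action, otherwise the best-known one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)


def ucb1(counts, mean_rewards):
    """Pick the action with the highest mean reward plus an uncertainty bonus."""
    for action, n in enumerate(counts):
        if n == 0:
            return action  # try every action at least once
    total = sum(counts)
    scores = [m + math.sqrt(2.0 * math.log(total) / n)
              for m, n in zip(mean_rewards, counts)]
    return max(range(len(scores)), key=scores.__getitem__)


rng = random.Random(0)
print(epsilon_greedy([0.1, 0.9, 0.3], epsilon=0.0, rng=rng))  # pure exploitation
print(ucb1([100, 2], [0.5, 0.4]))  # the rarely tried action wins on its bonus
```

ε-greedy explores blindly, while UCB directs exploration toward actions whose estimates are still uncertain.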

Types of Learning Used by AI Agents

Learning agents do not rely on a single, monolithic approach to acquire knowledge. Depending on the environment’s structure, the availability of data, and the nature of the feedback, a learning agent in AI may utilize several distinct machine learning paradigms.

Supervised Learning

In supervised learning, the agent learns from a fully labeled dataset provided by an external “teacher.” The agent receives input-output pairs and must learn to map the inputs to the correct outputs by minimizing a predefined loss function.

For example, a learning agent designed for image classification receives thousands of images, each tagged with a label. The learning element adjusts its internal parameters to minimize a loss such as Cross-Entropy Loss, the usual choice for classification, or Mean Squared Error (MSE), which is more common when the target is numeric. A standard calculation for MSE over N samples is:

MSE = (1 / N) Σᵢ (yᵢ − ŷᵢ)²

Where yᵢ is the true value and ŷᵢ is the agent’s prediction for sample i. While highly effective for pattern recognition, supervised learning is limited in autonomous agentic environments because it depends on labeled data, which is often expensive to produce, and it generalizes poorly outside its training distribution.
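The MSE formula translates directly into code. The sample values below are made up for illustration.

```python
def mse(y_true, y_pred):
    """Mean squared error over N paired samples."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(y_true, y_pred)) / len(y_true)


print(mse([3.0, -0.5, 2.0, 7.0], [2.5, 0.0, 2.0, 8.0]))  # → 0.375
```

The squared errors are 0.25, 0.25, 0.0 and 1.0, which average to 0.375.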

Unsupervised Learning

Unsupervised learning requires the agent to find hidden structures, distributions, or patterns within raw, unlabeled data. The agent is not given explicit instructions on what to look for; instead, it utilizes mathematical techniques to cluster data points, reduce dimensionality, or learn generative models.

For an AI agent mapping an unknown terrain, unsupervised learning allows it to categorize different types of obstacles or surfaces without prior labeling. Common algorithms include K-Means clustering and Principal Component Analysis (PCA). Unsupervised learning serves as a powerful perception-enhancement tool, often feeding structured representations of the environment into the agent’s performance element.
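As a rough sketch of the terrain example, the code below runs K-Means on one-dimensional "surface roughness" readings. The readings, the starting centroids, and the 1-D simplification are all assumptions. A production system would more likely use a library implementation on multi-dimensional features.

```python
def k_means_1d(points, centroids, iterations=10):
    """Lloyd's algorithm for K-Means on scalar data."""
    centroids = list(centroids)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda k: abs(p - centroids[k]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [sum(c) / len(c) if c else old
                     for c, old in zip(clusters, centroids)]
    return centroids, clusters


readings = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]  # unlabeled sensor readings
centroids, clusters = k_means_1d(readings, centroids=[0.0, 6.0])
print(centroids)  # two groups emerge, centered near 1.0 and 5.0
```

No labels were supplied. The "smooth" and "rough" groups are discovered from the data alone.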

Reinforcement Learning (RL)

Reinforcement Learning is the paradigm most closely associated with learning agents. In RL, there is no labeled dataset. Instead, the agent learns purely through interaction with the environment, receiving numerical reward signals based on its actions. The objective of an RL agent is to learn a policy (π) that maximizes the expected cumulative reward over time.

This problem is typically framed as a Markov Decision Process (MDP), defined by a set of states (S), a set of actions (A), transition probabilities (P), a reward function (R), and usually a discount factor (γ). A fundamental algorithm used by RL learning agents is Q-learning, which updates the expected utility (Q-value) of taking a specific action in a specific state. Its update rule is a temporal-difference step derived from the Bellman optimality equation:

Q(s, a) ← Q(s, a) + α [r + γ max_a’ Q(s’, a’) − Q(s, a)]

Where:

- s = current state

- a = current action

- α = learning rate

- r = immediate reward received

- γ = discount factor (valuing immediate vs future rewards)

- s’ = the next state

- a’ = a candidate action in the next state (the max is taken over all of them)

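By repeatedly applying this update via the learning element, the agent refines its value estimates for the state-action pairs it visits. With sufficient exploration and a suitably decaying learning rate, tabular Q-learning converges to the optimal Q-values, which lets the agent learn complex behaviors without explicit human supervision.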
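The update rule can be exercised on a tiny, made-up environment: a corridor of four states where reaching the right end pays a reward of 1. Everything about the environment is an assumption for illustration. To keep the sketch short, the agent behaves uniformly at random, which works because Q-learning is off-policy and learns the greedy values regardless of how actions are chosen.

```python
import random

N_STATES = 4      # states 0..3; state 3 is the terminal goal
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def step(state, action):
    """Deterministic corridor dynamics with reward 1.0 on reaching the goal."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # the terminal row stays at zero
    for _ in range(episodes):
        s = 0
        while s != N_STATES - 1:
            a = rng.choice(ACTIONS)  # purely exploratory behavior
            s_next, r = step(s, a)
            td_target = r + gamma * max(q[s_next])
            q[s][a] += alpha * (td_target - q[s][a])  # the Q-learning update
            s = s_next
    return q


q_table = q_learning()
greedy_policy = [max(ACTIONS, key=lambda a: q_table[s][a])
                 for s in range(N_STATES - 1)]
print(greedy_policy)  # the learned greedy action in each non-terminal state
```

Although the agent never acted greedily during training, the greedy policy read from the table moves right in every state. A practical agent would usually combine this update with ε-greedy action selection instead of fully random behavior.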

Meta-Learning (Learning to Learn)

Advanced AI agents utilize meta-learning, wherein the agent optimizes its own learning process. Instead of merely learning a single task, a meta-learning agent is trained across a wide distribution of tasks, allowing it to adapt rapidly to a new, unseen task with minimal data. This is particularly relevant in few-shot learning scenarios and in agents built on Large Language Models (LLMs), which can adapt their behavior to a new task from instructions and examples supplied in the prompt (in-context learning) without any update to their weights.

Learning Agents vs. Traditional AI Agents

To truly grasp the architectural significance of a learning agent, it is crucial to compare it against traditional, non-learning counterparts in the AI domain. The following table contrasts various agent types based on their decision basis, adaptability, and required environment knowledge.

| Agent Type | Decision Basis | Adaptability | Knowledge of Environment |
|---|---|---|---|
| Simple Reflex Agent | Current percepts only (Condition-Action rules). | None. Fails if the environment changes or rules are incomplete. | Requires fully observable environment to function correctly. |
| Model-Based Reflex Agent | Current percepts + Internal state (tracking past history). | Low. Can handle unobserved aspects but cannot learn new rules. | Can operate in partially observable environments based on hardcoded models. |
| Goal-Based Agent | Internal state + Goal information (search and planning). | Moderate. Can find new paths to a goal but does not improve its core search algorithms. | Requires a known model of the environment’s physics and transition logic. |
| Utility-Based Agent | Maximizing a predefined utility function (efficiency/happiness). | Moderate. Evaluates the “quality” of a state but relies on fixed utility metrics. | Requires comprehensive knowledge to calculate expected utility values. |
| Learning Agent | Dynamic models updated via feedback, rewards, and the Critic component. | Very High. Modifies its internal rules, heuristics, and models over time. | Can operate in completely unknown, dynamic, and partially observable environments. |

Real-World Applications and Uses

The theoretical framework of learning agents translates into powerful, real-world technologies that drive modern industry, software engineering, and scientific research.

Autonomous Vehicles

Self-driving cars are complex ensembles of learning agents. They operate in highly non-stationary, continuous environments where hardcoding every potential traffic scenario is impossible. By utilizing reinforcement learning and deep supervised learning, these agents continuously update their models. The performance element controls the steering and acceleration, while the learning element processes data from LiDAR and cameras to refine the agent’s predictive models for pedestrian behavior and vehicle trajectories.

Algorithmic Trading Systems

In the financial sector, market conditions are noisy, non-stationary, and non-deterministic. Traditional rule-based trading bots quickly become obsolete as market regimes shift. Learning agents driven by Deep Reinforcement Learning act as quantitative traders, adjusting their policies based on raw market data. The agent typically treats the change in portfolio value as the reward signal and uses the problem generator to occasionally test novel trading strategies that may uncover new market inefficiencies.

Robotics and Industrial Automation

Modern robotics relies on learning agents for tasks like grasping unknown objects or navigating warehouse floors. A major technique in this space is “Sim2Real” (Simulation to Reality) transfer. The learning agent is first trained in a physics simulation, where it can rapidly run millions of iterations of reinforcement learning. Once deployed on a physical robot, the learning agent continues to adapt to real-world friction, sensor noise, and hardware degradation that could not be perfectly simulated.

Generative AI and LLM Agents

With the rise of Large Language Models, autonomous software agents (e.g., AutoGPT and agents built with LangChain) have emerged. These systems approximate learning agents by using the LLM as the core performance element. The model's weights usually stay fixed, so adaptation comes from storing past interactions in a vector database (memory) and from reflection (acting as a critic). With these mechanisms, such an agent can write code, test it, read the compiler error (feedback), and adjust its subsequent code generation autonomously.

Personalized Recommendation Engines

Platforms like Netflix, Amazon, and YouTube employ learning agents to optimize user engagement. The agent treats the user as the environment. Recommending a video is the action, and the user’s watch time or click-through rate serves as the reward signal. The learning element continuously refines user embeddings, enabling the agent to predict and adapt to shifting user preferences over time.

Challenges in Designing a Learning Agent

Despite their immense capability, engineering a stable, efficient learning agent presents significant algorithmic and computational challenges.

1. The Exploration vs. Exploitation Dilemma

Balancing the problem generator is notoriously difficult. If an agent explores too much, it wastes compute resources and performs suboptimally by constantly trying random actions. If it exploits its current knowledge too heavily, it gets trapped in local optima, missing out on more rewarding strategies. Designing dynamic decay rates for exploration (e.g., gradually reducing the ε in ε-greedy algorithms) requires meticulous tuning.
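One common way to schedule exploration is exponential decay with a floor, sketched below. The starting value, floor, and decay rate are illustrative defaults and not recommendations.

```python
def decayed_epsilon(step, start=1.0, floor=0.05, decay=0.995):
    """Exploration rate after `step` updates: exponential decay with a lower bound."""
    return max(floor, start * decay ** step)


for step in (0, 100, 500, 1000):
    print(step, round(decayed_epsilon(step), 3))
```

The floor keeps a small amount of exploration alive indefinitely, which matters when the environment can change after training.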

2. Sample Efficiency and Computational Cost

Deep reinforcement learning agents are exceptionally data-hungry. While a human might learn to play a video game or drive a car in a few hours, a learning agent often requires millions of iterations to converge on a stable policy. This lack of sample efficiency necessitates massive computational power (clusters of GPUs/TPUs), which increases training costs and environmental impact.

3. Reward Shaping and Alignment

In reinforcement learning, the agent optimizes purely for the reward signal defined by the critic. If the performance standard is poorly defined, the agent may find loopholes. This phenomenon, known as reward hacking or specification gaming, occurs when an agent achieves the literal objective in an unintended, often destructive manner. Designing reward functions that are informative, accurate, and safe, including through reward shaping, remains an open research problem in AI safety.

4. Catastrophic Forgetting

When a learning agent transitions to a drastically new environment, the learning element updates the performance element’s weights to handle the new data. In continual learning scenarios using neural networks, this can cause the agent to overwrite the weights that encoded its previous knowledge, a phenomenon called catastrophic forgetting. Mitigation techniques include replaying stored past experience during training (experience replay buffers) and penalizing changes to weights that mattered for earlier tasks (elastic weight consolidation).
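A replay buffer is straightforward to sketch. The version below is a minimal illustration with a hypothetical `(state, action, reward, next_state)` transition format. It shows only storage and sampling, not how the sampled batch is fed into training.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-capacity store of past transitions, sampled uniformly at random."""

    def __init__(self, capacity, seed=0):
        self._storage = deque(maxlen=capacity)  # the oldest items are evicted first
        self._rng = random.Random(seed)

    def add(self, transition):
        self._storage.append(transition)

    def sample(self, batch_size):
        size = min(batch_size, len(self._storage))
        return self._rng.sample(list(self._storage), size)

    def __len__(self):
        return len(self._storage)


buffer = ReplayBuffer(capacity=3)
for i in range(5):
    buffer.add((i, 0, 0.0, i + 1))  # (state, action, reward, next_state)
print(len(buffer), buffer.sample(2))
```

Mixing old and new transitions in each training batch keeps earlier experience in play. Note that a small buffer like this one eventually evicts old data, so capacity is itself a design decision.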

5. Operating in Partially Observable Markov Decision Processes (POMDPs)

In reality, sensors are noisy, and environments are rarely fully observable. The agent must maintain a probabilistic belief state about the environment rather than absolute certainty. Designing learning agents that can optimize policies under high uncertainty typically relies on Bayesian inference over hidden states or on recurrent neural network architectures that summarize the observation history.
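A belief state can be updated with Bayes' rule each time a noisy observation arrives. The sketch below assumes two hidden states and a made-up sensor model. It shows only the observation update and omits the prediction step that accounts for state transitions.

```python
def update_belief(prior, likelihoods):
    """Bayes update: posterior(s) is proportional to P(observation | s) * prior(s)."""
    unnormalized = [p * l for p, l in zip(prior, likelihoods)]
    total = sum(unnormalized)
    if total == 0.0:
        raise ValueError("observation is impossible under the current belief")
    return [u / total for u in unnormalized]


prior = [0.5, 0.5]        # belief over ("door open", "door closed")
likelihoods = [0.9, 0.2]  # P(sensor reads "open" | each state), assumed values
posterior = update_belief(prior, likelihoods)
print(posterior)  # belief shifts toward "door open"
```

Here the posterior for "door open" is 0.45 / 0.55, roughly 0.82. The agent is more confident than before but still far from certain.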

Frequently Asked Questions (FAQs)

What is the difference between machine learning and a learning agent?

Machine learning is a broad set of statistical techniques used to find patterns in data. A learning agent is a distinct AI architecture that utilizes machine learning algorithms within a closed-loop system to interact dynamically with an environment, perceive feedback, and autonomously update its decision-making logic.

Can a learning agent operate in a partially observable environment?

Yes. Advanced learning agents handle partially observable environments by maintaining an internal memory or belief state. By utilizing sequence models (like LSTMs or Transformers) or tracking transition probabilities, the agent infers hidden environmental states based on historical percepts before executing an action.

What is the role of the Critic in an AI learning agent?

The critic evaluates the agent’s current actions against a fixed, objective performance standard. It interprets the raw feedback or environmental changes and generates a structured reward or penalty signal, informing the learning element exactly how well the performance element is currently operating.

Why is the problem generator necessary?

Without a problem generator, an agent would only execute actions it already knows yield a positive outcome. The problem generator introduces necessary exploration by forcing the agent to attempt new actions, preventing the system from becoming stuck in local, suboptimal routines and allowing it to discover superior strategies.