Understanding the Production System in AI

A production system in AI is a cognitive architecture framework used to build rule-based expert systems. It consists of a global database, a set of IF-THEN production rules, and a control system that executes these rules to solve complex computational problems and simulate human reasoning.

What is a Production System in AI?

A production system in Artificial Intelligence (AI) represents one of the earliest and most foundational cognitive architectures designed to model human problem-solving capabilities. Pioneered by researchers like Allen Newell and Herbert A. Simon, production systems serve as the underlying mechanism for expert systems—software designed to emulate the decision-making ability of a human expert. Unlike traditional imperative programming paradigms, where the control flow is explicitly defined by a sequence of instructions (e.g., loops and conditionals embedded within business logic), a production system strictly separates the domain knowledge from the control mechanism that evaluates it.

This architecture allows developers to define a vast repository of independent logical rules without needing to script the exact path the program must take to arrive at a conclusion. The system evaluates the current state of its environment, autonomously identifies which rules are applicable, and executes them until a terminal goal state is reached.

Key Components of a Production System

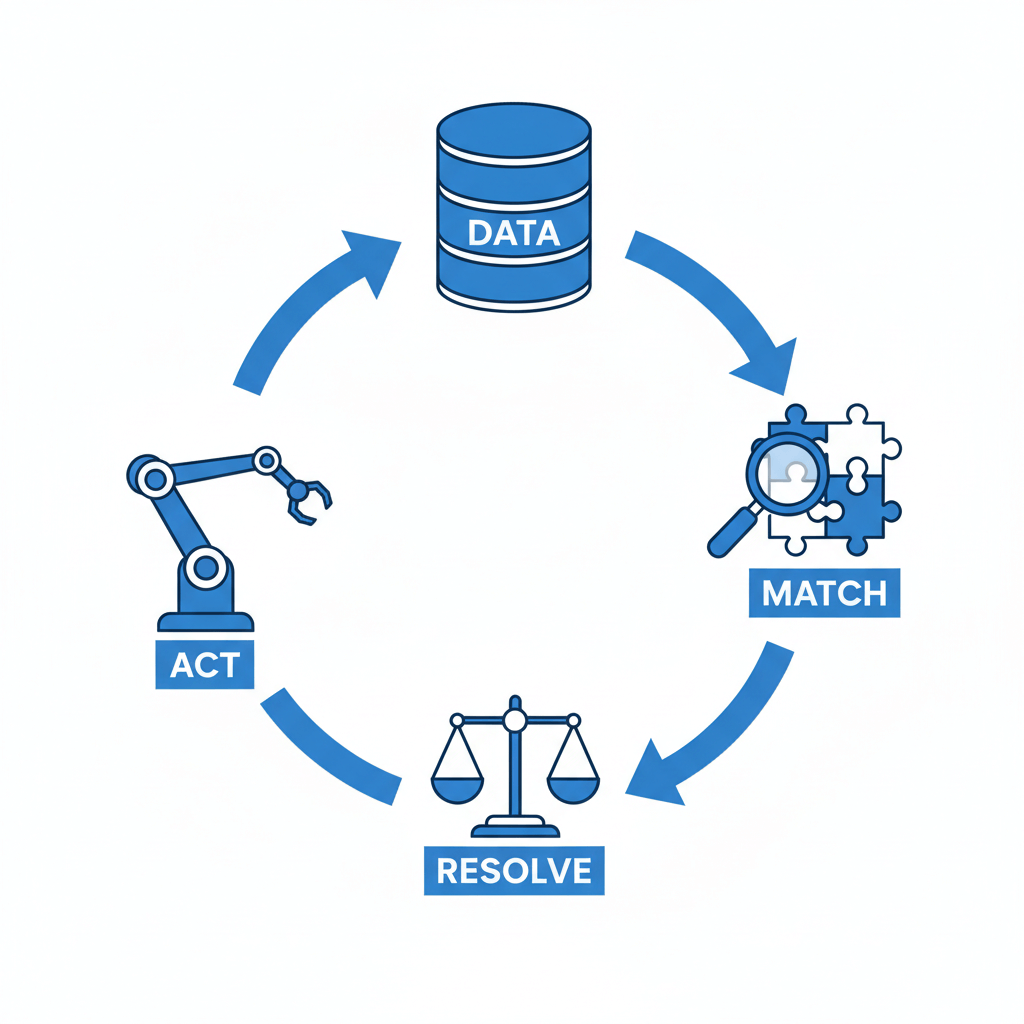

To function effectively, a production system relies on three interconnected core components. A failure or inefficiency in any of these components directly impacts the system’s ability to deduce accurate outcomes.

- Global Database (Working Memory): This is the dynamic storage component of the production system. It acts as the central repository for all current states, facts, and assertions known to the system at any given moment. The global database is continuously updated; as rules execute, they modify the state of this working memory by adding, updating, or deleting facts.

- Set of Production Rules (Knowledge Base): The knowledge base contains the domain-specific logic, formatted strictly as

IF <condition> THEN <action>statements. TheIFportion (the antecedent) specifies the conditions that must be met within the global database, while theTHENportion (the consequent) dictates the action to be taken, which typically involves altering the global database or interacting with an external environment. - Control System (Inference Engine): The inference engine is the execution brain of the production system. It dictates how rules are applied to the working memory. The control system continuously loops through a specific execution pipeline—matching rules against the database, resolving conflicts when multiple rules apply simultaneously, and executing the chosen rule to update the system state.

Core Characteristics of Production Systems

Understanding the characteristics of production systems is critical for software engineers tasked with building scalable rule-based engines. These systems possess unique structural attributes that make them highly suitable for domains requiring complex, logic-based inferences, yet completely unsuitable for tasks requiring pattern recognition or continuous mathematical optimization (where modern neural networks excel).

- Separation of Knowledge and Control: The most defining characteristic is the strict decoupling of the rule base from the inference engine. You can update, add, or remove rules from the knowledge base without ever rewriting the underlying execution algorithm.

- Modularity: Because rules are independent encapsulated units of logic, the system is highly modular. A single rule does not explicitly call another rule. Instead, rules interact indirectly by modifying the shared global database.

- Data-Driven Execution: The execution flow is not predetermined. It is highly reactive, dictated entirely by the data currently residing in the working memory. If the data changes, the execution path naturally shifts in response.

- Uniformity: All knowledge is represented in an identical, standardized format (the production rule). This uniformity makes it easier for both engineers and domain experts (who may not be programmers) to audit and validate the system’s logic.

- Traceability: Production systems offer excellent explainability. Because every state change is the direct result of a specific rule firing, developers can easily generate an execution trace to understand exactly why the AI arrived at a specific conclusion.

Production System Rules and Control Strategies

The operational efficiency of a production system hinges entirely on how its control strategies govern the evaluation of production rules. Because real-world applications often involve thousands of rules and massive working memories, the control strategy cannot afford to be computationally naive. A brute-force iteration over every rule during every cycle would result in catastrophic performance bottlenecks. Therefore, the control system must intelligently navigate the state space, utilizing specific execution cycles and search strategies to reach optimal conclusions rapidly.

The Anatomy of Production Rules

Production rules represent the operational semantics of the system. They evaluate propositions via logical operators (AND, OR, NOT).

- Antecedent (LHS – Left Hand Side): The condition part of the rule. It pattern-matches against the current facts in the global database. For a rule to trigger, the exact state described in the LHS must be true.

- Consequent (RHS – Right Hand Side): The action part of the rule. If the LHS is satisfied, the RHS executes. Actions typically include

ASSERT(adding a new fact),RETRACT(removing an existing fact), orMODIFY(updating a fact).

Control and Search Strategies

The Inference Engine must choose a search strategy to navigate the rule space. These strategies fall into two primary directional paradigms:

- Forward Chaining (Data-Driven): The system starts with the initial facts in the global database and continuously fires rules whose antecedents are satisfied. This process asserts new facts into the database, triggering more rules, and chaining forward until a specific goal state is reached or no further rules can fire. It is highly effective in systems where all initial data is known, such as configuration tasks or monitoring systems.

- Backward Chaining (Goal-Driven): The system starts with a target goal or hypothesis and works in reverse. It searches for rules whose consequent (THEN) matches the goal. It then looks at those rules’ antecedents (IF) and treats them as sub-goals, recursively attempting to prove them using the global database. This is heavily utilized in diagnostic systems (like medical or debugging AI), where the system asks questions to verify a suspected outcome.

Conflict Resolution in AI

During the “Match” phase of the execution cycle, the inference engine may find that multiple rules have their conditions satisfied simultaneously. This set of applicable rules is known as the conflict set. The engine must utilize Conflict Resolution Strategies to determine which single rule to “fire” (execute) next. Common strategies include:

- Specificity: The system prioritizes rules with more specific conditions over general ones. For example, a rule requiring three specific database facts will execute before a rule requiring only one of those facts.

- Recency: The system prioritizes rules that match against the most recently added facts in the working memory, ensuring the AI focuses on the latest developments in its environment.

- Refractoriness: A rule is prevented from firing more than once on the exact same set of data. This is crucial for preventing infinite execution loops.

- Explicit Rule Ordering: Rules are assigned static priority weights or execution indices by the developer. The rule with the highest priority in the conflict set fires first.

Classification of Production Systems in AI

Production systems are rigorously classified based on their mathematical and operational behaviors, specifically concerning how rule execution alters the search space and whether actions are reversible. Understanding these classifications is imperative when designing an AI architecture, as it dictates the complexity of the underlying search algorithms. If a system allows for the retraction of facts, the engine must be capable of backtracking, drastically increasing computational overhead.

Monotonic vs. Non-Monotonic Systems

- Monotonic Production Systems: In a monotonic system, the application of a rule never prevents the subsequent application of another rule that could have fired previously. Once a fact is asserted into the global database, it is permanently true and cannot be retracted. The knowledge base only grows. This classification simplifies the search process significantly, making it suitable for domains like theorem proving.

- Non-Monotonic Production Systems: These systems permit rules to retract or modify existing facts in the working memory. Because previously true facts can become false, rules that were previously applicable may lose their validity. This mimics real-world reasoning (e.g., default logic or belief revision) but requires complex truth maintenance systems to track dependencies between facts.

Commutative vs. Partially Commutative Systems

- Commutative Production Systems: A system is commutative if the order in which rules execute does not affect the final outcome. Any sequence of valid rule firings will eventually lead to the exact same final state.

- Partially Commutative Systems: A system where a set of rules can transform state $A$ to state $B$, and if any rule in that set is applied, the system can still eventually reach state $B$, though the specific path and intermediate states may differ.

Comparison of Production System Classifications

Below is a technical comparison of how these classifications dictate the architectural requirements of the system.

| Classification | Fact Retraction Allowed? | Order Dependency | System Reversibility | Primary Application Domain |

|---|---|---|---|---|

| Monotonic | No (Append-Only) | Irrelevant to validity of old facts | Irreversible (No backtracking needed) | Mathematical Theorem Proving, Static Fact Deduction |

| Non-Monotonic | Yes (Modify/Delete) | Highly Dependent | Requires complex Truth Maintenance | Medical Diagnosis, Real-Time Navigation, Belief Revision |

| Commutative | Typically No | Order does not affect final state | Reversible | Chemical Synthesis Planning (e.g., DENDRAL) |

| Partially Commutative | Yes | Path changes, but goal remains accessible | Partially Reversible | Game AI Search Spaces (e.g., 8-Puzzle, Chess) |

Advantages and Disadvantages of Production Systems

Before integrating a production system into an enterprise architecture, software engineers must carefully weigh the architectural trade-offs. While these systems offer unparalleled explainability and modularity for deterministic logic, they are not a silver bullet. They suffer from specific scaling issues that modern neural architectures bypass, though they excel in areas where deep learning models fail (such as strict regulatory compliance and logical auditing).

Advantages

- High Modularity and Flexibility: New knowledge can be injected into the system simply by writing new rules. Developers do not need to refactor existing business logic or rewrite core application flow.

- Excellent Traceability and Debugging: Because the system executes discrete, logical rules, it is trivial to generate a comprehensive audit trail. An engineer can pinpoint exactly which rule fired at which millisecond to produce a specific outcome, making them highly desirable in medical, legal, and financial sectors.

- Separation of Concerns: By cleanly separating the knowledge base from the inference engine, domain experts (like doctors or lawyers) can define the rules without needing deep software engineering expertise, while developers focus solely on optimizing the C++ or Python execution engine.

- Natural Representation of Knowledge: Human experts naturally express knowledge conditionally (e.g., “If the patient has a fever and a rash, then suspect measles”). Production systems map directly to this cognitive structure.

Disadvantages

- Computational Inefficiency at Scale: The fundamental process of pattern matching the global database against the knowledge base can be NP-hard. Even with advanced optimization algorithms like RETE (which compiles rules into a specialized graph to minimize redundant matching), execution overhead grows significantly as the rule base scales into the tens of thousands.

- Lack of Autonomous Learning: Traditional production systems cannot learn from their mistakes or adapt to new data patterns automatically. Any new logic requires a human operator to explicitly author a new rule. (Though modern hybrid systems integrate machine learning to dynamically generate rules).

- Opaque Rule Interactions (Spaghetti Rules): While individual rules are simple, the interaction of thousands of non-monotonic rules can become incredibly complex to trace. A rule modifying a fact might unintentionally trigger a cascade of unrelated rules, leading to unpredictable system behavior and severe debugging challenges.

Real-World Applications of Production Systems

Production systems form the backbone of numerous enterprise and historical AI applications. Their ability to handle strict, complex rule sets makes them indispensable in domains where precise, explainable logic is required.

- Medical Diagnostic Systems: One of the most famous early examples is MYCIN, developed in the 1970s. MYCIN used backward chaining production rules to identify bacteria causing severe infections and recommended specific antibiotic dosages based on the patient’s body weight.

- Chemical Analysis: DENDRAL utilized commutative production rules to hypothesize the molecular structure of organic compounds based on mass spectrometry data.

- Business Rule Engines (BRE): Modern enterprise applications heavily rely on production system derivatives, such as Drools (a Java-based rule engine) or CLIPS (C Language Integrated Production System). These engines manage complex business logic like dynamic pricing models, fraud detection algorithms in banking, and automated loan approval systems.

- Game AI and NPC Behavior: Video games frequently utilize simplified production systems (often structured as Behavior Trees or Goal-Oriented Action Planning) to dictate Non-Player Character (NPC) actions based on the dynamic state of the game world.

Implementing a Basic Production System

To demonstrate the underlying mechanics of a production system, we can implement a highly simplified, forward-chaining inference engine in Python. This script defines a working memory, a set of production rules, and an engine that continually evaluates and fires rules until no more conditions are met.

class Rule:

def __init__(self, name, conditions, actions):

"""

:param name: String identifier for the rule

:param conditions: List of lambda functions representing the LHS

:param actions: List of lambda functions representing the RHS

"""

self.name = name

self.conditions = conditions

self.actions = actions

self.has_fired = False

class ProductionSystem:

def __init__(self):

self.working_memory = set()

self.rules = []

def add_fact(self, fact):

self.working_memory.add(fact)

def add_rule(self, rule):

self.rules.append(rule)

def run(self):

rule_fired = True

# Forward chaining loop

while rule_fired:

rule_fired = False

for rule in self.rules:

if not rule.has_fired:

# Check if all conditions (LHS) are met in working memory

if all(cond(self.working_memory) for cond in rule.conditions):

print(f"Firing Rule: {rule.name}")

# Execute actions (RHS)

for action in rule.actions:

action(self.working_memory)

# Refractoriness: Prevent rule from firing again

rule.has_fired = True

rule_fired = True

break # Re-evaluate from the top after state change

# --- Implementation Example: Simple Server Monitoring ---

engine = ProductionSystem()

# Initial State

engine.add_fact("server_load_high")

engine.add_fact("memory_usage_normal")

# Rule 1: IF server load is high AND no alert sent, THEN assert alert_sent

rule1 = Rule(

name="Trigger High Load Alert",

conditions=[

lambda wm: "server_load_high" in wm,

lambda wm: "alert_sent" not in wm

],

actions=[

lambda wm: wm.add("alert_sent"),

lambda wm: print("ACTION: Alert sent to system admin.")

]

)

# Rule 2: IF alert_sent is true, THEN assert scale_up_server

rule2 = Rule(

name="Auto-Scale Server",

conditions=[

lambda wm: "alert_sent" in wm

],

actions=[

lambda wm: wm.add("server_scaling_initiated"),

lambda wm: print("ACTION: Server auto-scaling initiated.")

]

)

engine.add_rule(rule1)

engine.add_rule(rule2)

# Execute the Inference Engine

print("Initial Working Memory:", engine.working_memory)

engine.run()

print("Final Working Memory:", engine.working_memory)

In this code, the ProductionSystem continually loops over the rules. If the conditions (Antecedent) are satisfied against the working_memory (Global Database), it executes the actions (Consequent), thereby modifying the working memory. The has_fired flag acts as a simple refractoriness conflict resolution strategy to prevent infinite loops.

Frequently Asked Questions (FAQs)

Q: What is the difference between forward chaining and backward chaining in production systems?

Forward chaining is a data-driven approach that starts with known facts and applies rules to deduce new information until a goal is reached. Backward chaining is a goal-driven approach that starts with a hypothesized conclusion and works backward to see if the facts support it.

Q: What is the RETE algorithm in the context of production systems?

The RETE algorithm is a highly efficient pattern-matching algorithm used by inference engines. Instead of iterating through all rules and facts sequentially (which is computationally expensive), RETE compiles the rule base into a tree-like network graph. It caches intermediate matches, meaning the engine only evaluates changes to the data rather than re-evaluating the entire working memory, drastically improving system performance.

Q: How do production systems differ from modern machine learning models?

Production systems rely on explicit, human-programmed rules (symbolic AI) to deduce conclusions. They are highly explainable but cannot learn on their own. Modern machine learning models (like deep neural networks) rely on statistical AI; they autonomously learn patterns from vast datasets without explicit rule programming, but often act as “black boxes” where decision pathways are difficult to explain.

Q: Can a production system resolve conflicting rules on its own?

Yes, but only if an engineer has implemented a conflict resolution strategy within the inference engine. Strategies like prioritization (assigning weights to rules), specificity (choosing the most detailed rule), or recency (acting on the newest data) allow the system to algorithmically determine which rule to fire when a conflict occurs.