How to Become an LLM Engineer: Skills & Roadmap

An LLM engineer is a specialized artificial intelligence professional focused on designing, training, optimizing, and deploying Large Language Models (LLMs). Their work involves orchestrating complex transformer architectures, implementing fine-tuning techniques, building Retrieval-Augmented Generation (RAG) pipelines, and ensuring scalable model inference in production environments.

The Evolution of the LLM Engineer

The rapid proliferation of generative artificial intelligence has catalyzed the creation of a highly specialized role within machine learning: the LLM engineer. While traditional machine learning engineers primarily focus on training predictive models from scratch using tabular, image, or structured data, an LLM engineer operates at the intersection of natural language processing (NLP), distributed systems, and massive-scale deep learning.

This role demands an intimate understanding of foundation models like GPT, LLaMA, and Claude. Rather than building models from the ground up, an LLM engineer often begins with a pre-trained foundation model possessing billions of parameters and adapts it to solve complex, domain-specific enterprise problems. This adaptation requires navigating strict computational bottlenecks, managing GPU memory constraints, and implementing cutting-edge alignment techniques to ensure the model behaves predictably and safely in production. The transition from a generalized AI practitioner to an LLM engineer requires a fundamental shift in perspective: from focusing solely on model accuracy to balancing latency, throughput, context windows, and token economics.

Core Responsibilities of an LLM Engineer

The day-to-day operations of an LLM engineer are multifaceted, extending far beyond simply writing prompts or calling API endpoints. The role requires deep engineering rigor to integrate non-deterministic, autoregressive models into reliable software ecosystems. A senior LLM engineer must be capable of handling the complete lifecycle of a language model, from data curation to serving optimizations.

Data Curation and Pipeline Engineering

High-quality outputs require high-quality inputs. LLM engineers spend significant time architecting robust data pipelines to gather, clean, and format training data. For pre-training or continued pre-training, this involves deduplication, toxicity filtering, and implementing heuristic rules to remove low-quality text. For fine-tuning, the engineer must construct instruction-response pairs formatted consistently (e.g., using ChatML format). Managing data at this scale often necessitates proficiency with distributed processing frameworks like Apache Spark or Ray.
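The curation steps described above can be sketched in a few lines. This is a minimal illustration rather than a production pipeline: the helper names (`normalize`, `is_low_quality`, `deduplicate`, `to_chatml`) and the filter thresholds are assumptions made for this example, and real pipelines typically add fuzzy deduplication and toxicity classifiers on top.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies hash alike."""
    return re.sub(r"\s+", " ", text.strip().lower())


def is_low_quality(text: str, min_words: int = 5, max_symbol_ratio: float = 0.3) -> bool:
    """Hypothetical heuristics: reject very short or symbol-heavy text."""
    if len(text.split()) < min_words:
        return True
    symbols = sum(1 for ch in text if not (ch.isalnum() or ch.isspace()))
    return symbols / max(len(text), 1) > max_symbol_ratio


def deduplicate(docs: list[str]) -> list[str]:
    """Exact deduplication on a hash of the normalized text, keeping first occurrences."""
    seen: set[str] = set()
    kept = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            kept.append(doc)
    return kept


def to_chatml(instruction: str, response: str) -> str:
    """Format one instruction-response pair with ChatML-style role markers."""
    return (
        f"<|im_start|>user\n{instruction}<|im_end|>\n"
        f"<|im_start|>assistant\n{response}<|im_end|>\n"
    )


raw_docs = [
    "The transformer architecture relies on self-attention.",
    "the  transformer architecture relies on   self-attention.",  # duplicate after normalization
    "$$$ ### !!!",  # symbol noise
    "Too short.",
]
clean_docs = [doc for doc in deduplicate(raw_docs) if not is_low_quality(doc)]
print(clean_docs)
print(to_chatml("Summarize the document.", "It explains self-attention."))
```

Only the first document survives: the second is a duplicate once whitespace and case are normalized, and the last two fail the length heuristic.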

Model Fine-Tuning and Alignment

Adapting a base model to follow instructions or adopt a specific persona is a critical responsibility. LLM engineers execute Supervised Fine-Tuning (SFT) using high-quality human-annotated or synthetically generated datasets. Furthermore, they implement alignment techniques such as Reinforcement Learning from Human Feedback (RLHF) or Direct Preference Optimization (DPO). These methodologies adjust the model’s behavioral policy to minimize hallucinations, prevent toxic outputs, and improve the helpfulness of generated responses.

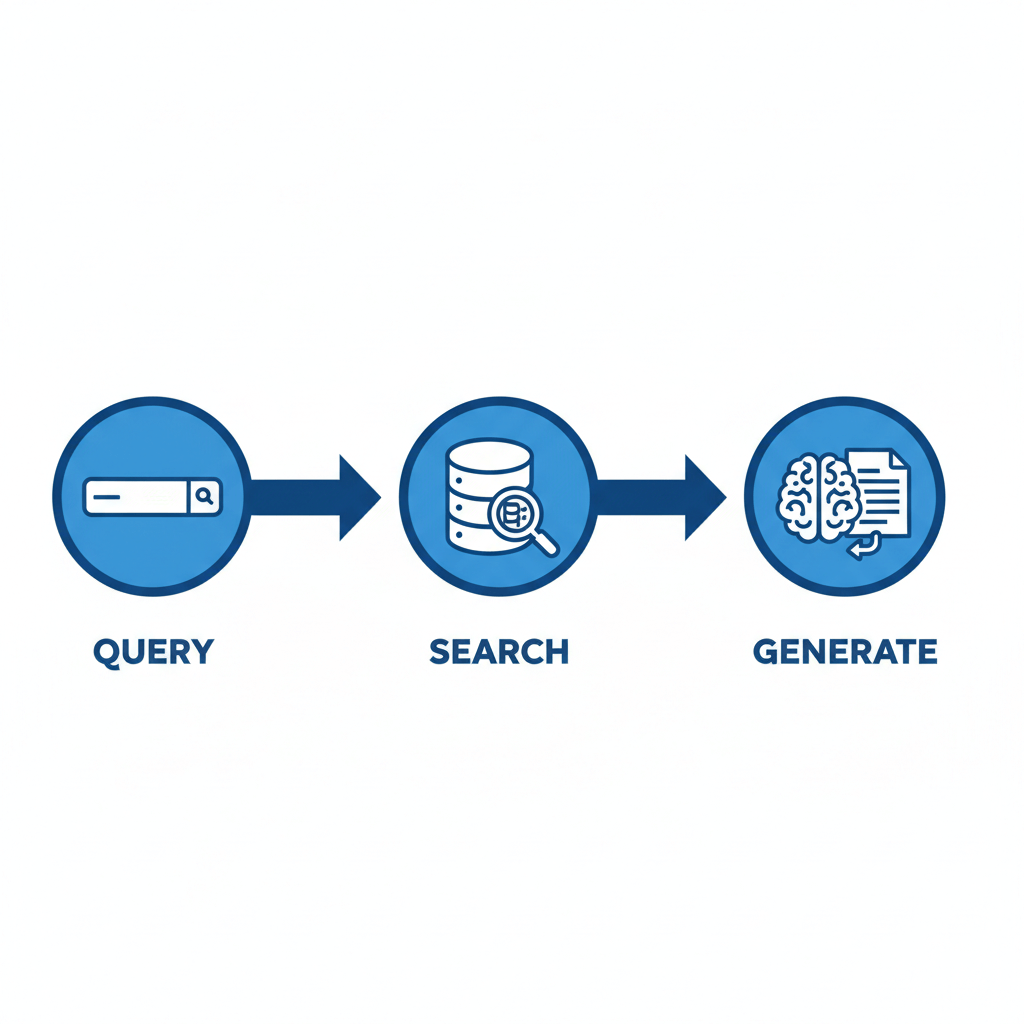

Developing Retrieval-Augmented Generation (RAG) Pipelines

Modern LLMs are constrained by their training cutoff dates and a tendency to hallucinate facts. LLM engineers address this by designing RAG architectures. This involves generating dense vector representations (embeddings) of enterprise documents, storing them in specialized vector databases, and orchestrating retrieval mechanisms. When a user queries the system, the engineer’s pipeline performs a semantic search, injects the most relevant context into the LLM’s prompt window, and instructs the model to generate an answer grounded in the retrieved documents.
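The context-injection step can be illustrated with a small prompt builder. This is a sketch under stated assumptions: `build_grounded_prompt` is a hypothetical helper, the instruction wording is only one possible phrasing, and a character budget stands in for a real token limit.

```python
def build_grounded_prompt(question: str, passages: list[str], max_chars: int = 2000) -> str:
    """Assemble a prompt that asks the model to answer only from numbered passages."""
    context_lines = []
    used = 0
    for i, passage in enumerate(passages, start=1):
        entry = f"[{i}] {passage}"
        if used + len(entry) > max_chars:  # crude budget standing in for a token limit
            break
        context_lines.append(entry)
        used += len(entry)
    context = "\n".join(context_lines)
    return (
        "Answer the question using only the context below. "
        "Cite passage numbers, and say you do not know if the context is insufficient.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Passages would normally come from the retriever, ordered by relevance.
prompt = build_grounded_prompt(
    "What is the refund window?",
    ["Refunds are accepted within 30 days.", "Shipping takes 5 days."],
)
print(prompt)
```

Numbering the passages gives the model something concrete to cite, which also makes its answers easier to audit afterwards.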

Evaluation and Benchmarking

Evaluating an LLM is inherently more complex than calculating precision or recall for a binary classifier. LLM engineers must establish rigorous, automated benchmarking suites. They employ traditional NLP metrics like ROUGE and BLEU, alongside modern, model-based evaluation paradigms such as “LLM-as-a-Judge,” where a stronger model (like GPT-4) scores the outputs of a smaller, fine-tuned model based on relevance, coherence, and factual accuracy. They also utilize standardized benchmarks like MMLU (Massive Multitask Language Understanding) or HumanEval to detect regressions during model updates.
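To make the overlap-based metrics concrete, here is a simplified ROUGE-1 F1 score. It assumes whitespace tokenization and lowercasing only; reference implementations typically add stemming and other normalization, so treat this as an illustration of the idea rather than a drop-in metric.

```python
from collections import Counter


def rouge1_f1(candidate: str, reference: str) -> float:
    """Unigram-overlap F1 between a candidate and a reference (simplified ROUGE-1)."""
    cand_counts = Counter(candidate.lower().split())
    ref_counts = Counter(reference.lower().split())
    # Counter intersection clips each word's count to the smaller of the two sides.
    overlap = sum((cand_counts & ref_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


score = rouge1_f1("the cat sat on the mat", "the cat lay on the mat")
print(f"{score:.3f}")  # 0.833
```

Five of the six unigrams match on each side, so precision and recall are both 5/6. The example also shows the weakness of such metrics: swapping one verb barely moves the score even if the meaning changes.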

Inference Optimization and Deployment

Serving an LLM with billions of parameters is exceptionally compute-intensive. A primary responsibility of the LLM engineer is reducing inference latency and maximizing throughput (tokens generated per second). This requires deep knowledge of hardware utilization. Engineers implement techniques like KV-caching to avoid redundant calculations, model quantization to reduce memory footprints, and continuous batching to maximize GPU utilization in production servers using frameworks like vLLM or TensorRT-LLM.

Essential Skills and Technologies

Mastering LLM engineering requires an extensive toolkit. Professionals in this domain must bridge the gap between theoretical deep learning mathematics and low-level system optimization. The following skills form the technical foundation required to succeed.

Deep Learning Frameworks and Programming

Python is the undisputed language of AI, and absolute fluency is required. Beyond standard Python, an LLM engineer must be an expert in deep learning frameworks, specifically PyTorch. PyTorch is the backbone of modern LLM research and deployment. Engineers must understand how to construct custom neural network modules, manage distributed tensors across multiple GPUs, and debug gradient computations. Additionally, deep familiarity with the Hugging Face ecosystem—specifically the transformers, datasets, and accelerate libraries—is mandatory, as it serves as the industry standard for loading, modifying, and interacting with open-weight models.

Transformer Architecture Mechanics

Treating an LLM as a “black box” is insufficient for an engineer. A profound understanding of the Transformer architecture, introduced in the seminal “Attention Is All You Need” paper, is required. You must understand the mathematics of the scaled dot-product attention mechanism:

Attention(Q, K, V) = softmax((Q K^T) / √d_k) V

Engineers need to comprehend how queries (Q), keys (K), and values (V) interact to establish contextual relationships between tokens. Furthermore, understanding architectural variations is crucial. This includes differences between encoder-only models (BERT), encoder-decoder models (T5), and decoder-only autoregressive models (GPT, LLaMA). Knowledge of advanced positional encoding schemes, such as Rotary Position Embedding (RoPE) and ALiBi, is also expected, as these directly impact a model’s ability to extrapolate to longer context windows.
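The attention formula above can be written out directly. The sketch below uses pure Python lists so that every step is visible; it covers a single head with no masking, and in practice the same computation would be expressed with batched PyTorch tensor operations.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q: list[list[float]], K: list[list[float]], V: list[list[float]]) -> list[list[float]]:
    """softmax(Q K^T / sqrt(d_k)) V for one head, without a causal mask."""
    d_k = len(K[0])
    outputs = []
    for q in Q:
        # One scaled dot product per key: how strongly this query matches each token.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return outputs


Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
out = attention(Q, K, V)
print(out)
```

The query aligns with the first key, so the output leans toward the first value vector while still mixing in the second.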

Parameter-Efficient Fine-Tuning (PEFT)

Updating all weights in a 70-billion parameter model requires massive compute clusters, which is often financially unviable. LLM engineers utilize Parameter-Efficient Fine-Tuning (PEFT) techniques to train models on consumer-grade hardware or smaller cloud instances.

The most prominent technique is Low-Rank Adaptation (LoRA). Instead of modifying the original dense weight matrix (W0), LoRA freezes W0 and injects trainable rank decomposition matrices (A and B) into the transformer layers.

W = W0 + ΔW = W0 + B A

Because the rank (r) is kept extremely small, the number of trainable parameters drops by orders of magnitude (often >98%), drastically reducing VRAM requirements while maintaining near-parity with full fine-tuning performance. Engineers must also be familiar with QLoRA, which quantizes the base model to 4-bit precision before applying LoRA adapters, pushing memory efficiency even further.
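A quick calculation shows where the savings come from. The sketch assumes a single square projection matrix of size 4096 x 4096 and rank 8; both numbers are illustrative choices, not values taken from a specific model.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full fine-tuning params, LoRA trainable params) for one weight matrix."""
    full = d_out * d_in              # every entry of W0 is trainable
    lora = rank * (d_out + d_in)     # B is d_out x r, A is r x d_in
    return full, lora


full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
reduction = 1 - lora / full
print(full, lora, f"{reduction:.2%}")  # 16777216 65536 99.61%
```

Because the adapter size grows with r * (d_out + d_in) rather than d_out * d_in, the relative saving increases as the layers get wider.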

Retrieval-Augmented Generation (RAG) and Vector Databases

To build state-of-the-art enterprise AI, engineers must master RAG infrastructures. This requires a deep understanding of text embedding models, which map sentences into high-dimensional vector spaces. Engineers must know how to measure how close two embedding vectors A and B are using Cosine Similarity:

Cosine Similarity = (A · B) / (||A|| ||B||)
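As a minimal sketch, cosine similarity and a brute-force top-k search fit in a few lines. The three-dimensional vectors and document IDs below are made up for illustration; real embeddings have hundreds or thousands of dimensions, and vector databases replace the linear scan with approximate indexes.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """(A . B) / (||A|| ||B||), returning 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Brute-force search: score every stored vector and keep the k most similar."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical toy index mapping document IDs to embeddings.
index = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.7, 0.7, 0.0],
    "doc_c": [0.0, 0.0, 1.0],
}
results = top_k([1.0, 0.1, 0.0], index, k=2)
print([doc_id for doc_id, _ in results])  # ['doc_a', 'doc_b']
```

Cosine similarity ignores vector length, so a long document and a short query can still score highly if they point in the same direction.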

Beyond the mathematics, practical experience with Vector Databases such as Pinecone, Milvus, Qdrant, or FAISS is essential. An LLM engineer must also master advanced RAG techniques, such as semantic chunking, multi-query routing, hypothetical document embeddings (HyDE), and re-ranking algorithms (using cross-encoders) to maximize the precision of the retrieved context.

MLOps and Inference Optimization

Deploying LLMs is arguably the hardest part of the job. Engineers must navigate the “Memory Wall”—the bottleneck where moving data from GPU memory to compute cores takes longer than the computation itself.

To mitigate this, engineers must understand:

- Quantization: Reducing the precision of model weights from 16-bit floats (FP16/BF16) to 8-bit or 4-bit integers (INT8/INT4). Engineers should be familiar with algorithms like AWQ (Activation-aware Weight Quantization) and GPTQ, which minimize accuracy degradation during compression.

- KV Caching: Storing the Key and Value vectors of previously generated tokens in memory to avoid redundant computations during autoregressive generation.

- PagedAttention: An algorithm popularized by the vLLM framework that manages KV cache memory dynamically, similar to virtual memory paging in operating systems, significantly reducing memory fragmentation and allowing for larger batch sizes.
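To make the quantization idea concrete, the sketch below applies simple symmetric round-to-nearest INT8 quantization with a single scale per tensor. This is deliberately not AWQ or GPTQ, which choose scales and roundings more carefully to limit accuracy loss; the weight values are illustrative.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one absmax-derived scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero for all-zero input
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.03, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # [50, -127, 3, 100]
print(max(abs(w - r) for w, r in zip(weights, restored)))  # rounding error, at most scale / 2
```

Each weight now needs one byte instead of two, at the cost of a rounding error bounded by half the scale. A single outlier weight inflates the scale for the whole tensor, which is one reason more careful schemes exist.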

The Comprehensive LLM Engineer Roadmap

Transitioning into LLM engineering requires a structured learning path. Because the field evolves weekly, building a strong theoretical foundation is far more valuable than simply learning the syntax of today’s trendy AI wrapper libraries. The following phase-by-phase roadmap outlines the journey from novice to senior LLM engineer.

Phase 1: Foundational Mathematics and Programming

Before touching neural networks, you must solidify your mathematical prerequisites. Deep learning is essentially applied linear algebra, multivariable calculus, and probability theory.

- Linear Algebra: Understand vectors, matrices, dot products, matrix multiplication, and singular value decomposition (SVD). These concepts are the bedrock of embeddings and neural network layers.

- Calculus: Grasp partial derivatives and the chain rule. You must understand how gradients flow backward through a computational graph to update weights during training.

- Probability & Statistics: Learn about probability distributions, expected values, and cross-entropy. Language modeling is fundamentally the process of calculating a probability distribution over a vocabulary of tokens.

- Programming: Achieve advanced proficiency in Python. Learn to write clean, modular, object-oriented code. Master libraries like NumPy and Pandas for data manipulation.
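The probability item above is worth seeing in code: a language model emits one logit per vocabulary token, softmax turns the logits into a distribution, and cross-entropy measures how much probability landed on the correct next token. The three-token vocabulary and the logit values are made up for illustration.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Convert raw scores into a probability distribution that sums to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits: list[float], target_index: int) -> float:
    """Negative log-probability assigned to the correct token."""
    return -math.log(softmax(logits)[target_index])


vocab = ["cat", "dog", "car"]      # hypothetical vocabulary
logits = [2.0, 1.0, 0.1]           # hypothetical model outputs for the next token
probs = softmax(logits)
loss = cross_entropy(logits, target_index=vocab.index("cat"))
print(dict(zip(vocab, probs)), loss)
```

A model that knows nothing assigns equal probability to every token, giving a loss of log(vocabulary size). Training pushes the loss below that baseline.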

Phase 2: Core Machine Learning and Deep Learning

Do not skip straight to Large Language Models. A robust understanding of classical machine learning and standard deep learning is crucial for debugging complex AI systems.

- Machine Learning Fundamentals: Understand the differences between supervised, unsupervised, and reinforcement learning. Learn about bias-variance tradeoff, overfitting, regularization, and cross-validation.

- Deep Learning Basics: Build multilayer perceptrons (MLPs) from scratch. Understand activation functions (ReLU, GELU, Sigmoid), loss functions, and optimization algorithms (Stochastic Gradient Descent, Adam, AdamW).

- PyTorch Proficiency: Transition from conceptual understanding to implementation using PyTorch. Learn to construct `nn.Module` classes, write custom training loops, and manage data loaders.

Phase 3: Natural Language Processing (NLP) Evolution

To appreciate the Transformer, you must understand the problems it solved. Trace the historical evolution of NLP architectures.

- Classical NLP: Learn about tokenization, stemming, lemmatization, and TF-IDF.

- Word Embeddings: Study Word2Vec and GloVe. Understand how representing words as dense vectors captures semantic relationships.

- Sequential Models: Study Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks. Understand the vanishing gradient problem and the limitations of sequential processing for long texts.

- The Transformer: Read and dissect the “Attention Is All You Need” paper. Code a multi-head attention block from scratch. Understand how transformers allow for parallel processing of input sequences, entirely bypassing the limitations of RNNs.

Phase 4: Advanced LLM Techniques and Application

With the foundational knowledge secured, shift your focus exclusively to modern Large Language Models and their application layer.

- Prompt Engineering & Orchestration: Learn advanced prompting techniques (Few-Shot, Chain-of-Thought, Tree of Thoughts). Build agentic workflows using frameworks like LangChain or LlamaIndex, where LLMs can route tasks, use external APIs, and execute code.

- Fine-Tuning: Implement Supervised Fine-Tuning (SFT) using the Hugging Face TRL (Transformer Reinforcement Learning) library. Master PEFT methodologies, specifically LoRA and QLoRA.

- RAG Architecture: Build comprehensive RAG pipelines from scratch. Integrate vector databases, implement chunking strategies, and optimize retrieval using BM25 hybrid search and Cohere-style cross-encoder re-rankers.

- Alignment: Study the mechanics of RLHF. Understand how to train a Reward Model to score LLM outputs, and how Proximal Policy Optimization (PPO) is used to update the model. Subsequently, study DPO (Direct Preference Optimization) as a modern, mathematically elegant alternative to PPO-based RLHF that optimizes directly on preference pairs and removes the need for a separately trained reward model.
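For the alignment item, the DPO objective for a single preference pair is compact enough to write out. The sketch assumes you already have the summed log-probabilities of the chosen and rejected responses under both the policy being trained and a frozen reference model; the function name, argument names, and numeric values are illustrative, and `beta=0.1` is simply a commonly used default.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """-log(sigmoid(beta * (chosen log-ratio - rejected log-ratio))) for one pair."""
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    x = beta * (chosen_ratio - rejected_ratio)
    # -log(sigmoid(x)) equals log(1 + exp(-x)); branch to avoid overflow in exp.
    if x >= 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))


# Policy identical to the reference: no preference learned yet, loss is log(2).
baseline = dpo_loss(-5.0, -7.0, -5.0, -7.0)
# Policy raises the chosen response and lowers the rejected one: loss drops.
improved = dpo_loss(-4.0, -8.0, -5.0, -7.0)
print(baseline, improved)
```

The loss depends only on how far the policy has moved relative to the reference, which is what keeps the fine-tuned model anchored to its starting point.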

Phase 5: Production, MLOps, and Deployment

The final phase distinguishes junior developers from senior LLM engineers. It focuses on scalability, latency, and system architecture.

- Serving Frameworks: Deploy models using high-performance serving engines like vLLM, Text Generation Inference (TGI), or NVIDIA Triton Inference Server.

- Optimization Techniques: Experiment with Post-Training Quantization (PTQ) methods and formats like GGUF, AWQ, and EXL2 (the format used by ExLlamaV2). Understand the tradeoffs between perplexity degradation and inference speed.

- Observability: Implement telemetry to monitor LLM performance in production. Track metrics such as Time to First Token (TTFT), Inter-Token Latency, and GPU memory utilization. Build safeguards against prompt injection and jailbreak attacks.
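The latency metrics in the observability item can be derived from token arrival timestamps. This is a minimal sketch: `latency_metrics` is a hypothetical helper, and the timestamps below are made-up values in seconds that a streaming client would normally record itself.

```python
def latency_metrics(request_start: float, token_times: list[float]) -> dict[str, float]:
    """Compute TTFT, mean inter-token latency, and throughput for one streamed response."""
    if not token_times:
        raise ValueError("at least one token timestamp is required")
    ttft = token_times[0] - request_start
    # Gaps between consecutive tokens; empty when only one token was produced.
    gaps = [later - earlier for earlier, later in zip(token_times, token_times[1:])]
    mean_itl = sum(gaps) / len(gaps) if gaps else 0.0
    total = token_times[-1] - request_start
    tokens_per_s = len(token_times) / total if total > 0 else float("inf")
    return {"ttft_s": ttft, "mean_itl_s": mean_itl, "tokens_per_s": tokens_per_s}


metrics = latency_metrics(request_start=0.0, token_times=[0.5, 0.6, 0.7, 0.8])
print(metrics)
```

TTFT is dominated by queueing and prompt processing, while inter-token latency reflects the decode loop, so tracking them separately points to different bottlenecks.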

Comparison: LLM Engineer vs. Traditional Machine Learning Engineer

While there is overlap, the day-to-day realities, constraints, and methodologies of an LLM Engineer differ significantly from those of a traditional Machine Learning Engineer. The table below highlights the primary distinctions.

| Feature/Domain | LLM Engineer | Traditional ML Engineer |
|---|---|---|
| Primary Focus | Adapting, fine-tuning, and serving massive pre-trained transformer models. | Training predictive models (classification, regression) from scratch on structured data. |
| Model Scale | Billions of parameters (e.g., 8B to 100B+ parameters). Heavy focus on memory constraints. | Millions of parameters (e.g., Random Forests, XGBoost, standard CNNs). |
| Compute Requirements | Multi-GPU clusters (A100s, H100s). Distributed training and inference are mandatory. | Often trainable on a single GPU or even powerful CPUs. |
| Data Modality | Vast corpora of unstructured text, code, and conversational data. | Structured tabular data, time-series data, or structured image datasets. |
| Core Techniques | RAG, LoRA, QLoRA, RLHF, DPO, KV Caching, Continuous Batching. | Feature engineering, cross-validation, hyperparameter tuning, SMOTE. |
| Evaluation Metrics | Perplexity, ROUGE, LLM-as-a-Judge, MMLU, HumanEval. | Accuracy, F1-Score, Precision, Recall, [AUC-ROC](https://www.appliedaicourse.com/blog/auc-roc-curve/), RMSE. |

Challenges and Future Directions in LLM Engineering

The field of LLM engineering is nascent, meaning engineers operate at the bleeding edge of computer science. This presents several unique, ongoing challenges that engineers must constantly navigate and solve.

Managing Context Limits and Memory Bottlenecks

As enterprise use cases demand larger context windows (allowing users to upload entire codebases or hundreds of PDF documents in a single prompt), engineers face quadratic scaling issues. In standard self-attention, compute scales quadratically (O(N^2)) with sequence length (N), and so does memory when the full attention matrix is materialized. The KV cache grows only linearly with N, but at 100k+ tokens it can still exhaust GPU memory. Techniques like Ring Attention and architectures like Mamba (a State Space Model) attempt to mitigate these costs, yet long-context memory management remains a severe engineering bottleneck.
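A back-of-the-envelope calculation makes the memory pressure tangible. The model configuration below (32 layers, 8 key-value heads, head dimension 128, FP16 values) is a hypothetical example chosen for illustration, and the function names are not from any library.

```python
def kv_cache_bytes(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int = 1,
    bytes_per_elem: int = 2,
) -> int:
    """Memory for cached keys and values; grows linearly with sequence length."""
    # The leading 2 accounts for storing both a key and a value vector per token.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


def attention_matrix_bytes(seq_len: int, bytes_per_elem: int = 2) -> int:
    """Memory for one head's full N x N score matrix, if it is materialized."""
    return seq_len * seq_len * bytes_per_elem


GIB = 2**30
cache = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=100_000)
print(f"KV cache at 100k tokens: {cache / GIB:.2f} GiB")  # 12.21 GiB
print(attention_matrix_bytes(2_000) / attention_matrix_bytes(1_000))  # 4.0
```

Under these assumptions a single 100k-token request needs roughly 12 GiB for its cache alone, before model weights or any other concurrent requests. Doubling the sequence length doubles the cache but quadruples the size of a materialized attention matrix.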

Mitigating Hallucinations and Ensuring Grounding

Despite immense advancements, LLMs remain probabilistic pattern matchers. They have no built-in mechanism for verifying facts, which makes them prone to hallucinations: highly plausible but false statements. LLM engineers must constantly refine RAG pipelines, enforce citation requirements via prompt engineering, and utilize self-correction loops where an agent reviews its own output before returning it to the user. Ensuring reliable, verifiable grounding in highly regulated industries (like healthcare or finance) remains a paramount challenge.

Compute Constraints and Economic Viability

Running massive AI infrastructure is prohibitively expensive. An LLM engineer is often tasked with the “Tokenomics” of a project. They must evaluate whether routing a query to an expensive proprietary API (like GPT-4) is necessary, or if a highly quantized, task-specific, fine-tuned open-source model (like LLaMA-3 8B) running on cheaper, local hardware can achieve the same accuracy. Balancing the tradeoff between model capability, infrastructure cost, and user latency is a daily architectural challenge.
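The comparison described above often starts as simple arithmetic. In the sketch below every number is a placeholder, not a real vendor price or GPU rate, and both function names are hypothetical; substitute your own traffic figures and current pricing.

```python
def monthly_api_cost(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_1k: float,
    price_out_per_1k: float,
    days: int = 30,
) -> float:
    """Estimated monthly spend when every request goes to a per-token-priced API."""
    per_request = (input_tokens / 1000) * price_in_per_1k + (output_tokens / 1000) * price_out_per_1k
    return per_request * requests_per_day * days


def monthly_self_hosted_cost(gpu_hourly_rate: float, gpus: int, hours_per_day: int = 24, days: int = 30) -> float:
    """Estimated monthly spend for always-on GPU instances (hardware only)."""
    return gpu_hourly_rate * gpus * hours_per_day * days


# Placeholder inputs for illustration only.
api = monthly_api_cost(10_000, input_tokens=1500, output_tokens=500, price_in_per_1k=0.01, price_out_per_1k=0.03)
hosted = monthly_self_hosted_cost(gpu_hourly_rate=2.0, gpus=1)
print(round(api, 2), hosted)  # 9000.0 1440.0
```

The self-hosted figure leaves out engineering time, monitoring, and idle capacity, and it only holds if one GPU can actually serve the traffic at acceptable latency, so treat the output as a starting point for the discussion rather than a verdict.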

Security and Prompt Injection

As LLMs are granted access to external tools, databases, and APIs via agentic frameworks, the attack surface grows with every integration. Prompt injection, where a malicious user crafts a prompt designed to override the system’s instructions and force the AI to leak sensitive data or execute unauthorized commands, is a critical vulnerability. LLM engineers must build robust input sanitization layers, utilize “guardrail” models to classify and reject malicious intent, and enforce strict least-privilege access when binding LLMs to enterprise APIs.

Frequently Asked Questions (FAQ)

Do I need a Ph.D. to become an LLM engineer?

No, a Ph.D. is not strictly necessary for an applied LLM engineering role. While AI research scientist roles (creating novel architectures) heavily favor Ph.D. candidates, LLM engineers focus on applied AI—building infrastructure, fine-tuning, and deployment. A strong background in software engineering, a deep understanding of PyTorch, and a proven portfolio of complex AI projects are significantly more important than academic credentials.

What is the difference between an AI Engineer and an LLM Engineer?

An AI Engineer is a broader title that encompasses building computer vision systems, predictive models, robotics algorithms, and speech recognition tools. An LLM Engineer is a highly specialized subset of AI engineering focused exclusively on text-based (and multimodal) generative transformer models, dealing heavily with NLP, tokenization, and large-scale distributed inference.

How important are Data Structures and Algorithms (DSA) for this role?

Very important. Much of your time is spent working with neural networks, but building data pipelines, handling vector embeddings, and optimizing low-level memory usage for deployment all require a strong grasp of algorithmic complexity. Efficient chunking strategies, vector search indexing (like HNSW), and optimizing Python bottlenecks all rely on solid DSA fundamentals.

What programming language is most important for LLM development?

Python is undeniably the primary language for AI and LLM development, given the dominance of PyTorch and the Hugging Face ecosystem. However, for senior roles focused on high-performance deployment and inference optimization, knowledge of C++ or Rust, along with CUDA programming for direct GPU communication, is highly advantageous.