Expectation-Maximization (EM) Algorithm in ML Explained

The EM algorithm in machine learning is an iterative mathematical framework used to find maximum likelihood estimates of parameters in statistical models containing unobserved latent variables. It alternates between an expectation (E) step, which estimates missing data, and a maximization (M) step, which optimizes the model parameters based on those estimates.

The Problem of Latent Variables and Maximum Likelihood

To fully grasp the necessity of the Expectation-Maximization algorithm, one must first understand the fundamental objective of parametric machine learning models: finding the optimal set of parameters that best describe a given dataset. This is universally achieved using Maximum Likelihood Estimation (MLE). In standard MLE, we assume that all variables in our dataset are observable. By taking the derivative of the log-likelihood function with respect to the model parameters and setting it to zero, we can analytically or numerically solve for the parameter values that maximize the probability of observing our training data.

However, real-world data is rarely pristine or fully observable. In many complex modeling scenarios, the data is incomplete, or the underlying data distribution is driven by hidden, unobservable factors known as latent variables. When latent variables are introduced into a probabilistic model, the standard Maximum Likelihood Estimation approach fails. To calculate the likelihood of the observed data, one must marginalize (integrate or sum) over all possible states of the hidden variables. This creates a log-likelihood function that contains a sum inside the logarithm, rendering the derivative highly complex and computationally intractable. We cannot optimize the parameters because we do not know the hidden states, and we cannot determine the hidden states because we do not have the optimal parameters. This chicken-and-egg problem is precisely what the EM algorithm resolves.

Maximum Likelihood Estimation (MLE) Limitations

Given a dataset X and model parameters θ, standard MLE attempts to maximize the log-likelihood function:

L(θ) = log P(X | θ)

When a latent variable Z is introduced, the true probability distribution depends on both X and Z. The incomplete-data log-likelihood becomes:

L(θ) = log ( Σ_Z P(X, Z | θ) )

The presence of the summation (Σ) inside the natural logarithm prevents the log function from directly applying to the probability density components. This mathematical roadblock prevents a closed-form solution, forcing data scientists to rely on iterative approximation techniques.

What is the Expectation-Maximization (EM) Algorithm?

The Expectation-Maximization algorithm, formalized in a seminal 1977 paper by Arthur Dempster, Nan Laird, and Donald Rubin, is an elegant iterative optimization technique designed to bypass the intractable marginalization problem of latent variables. Rather than attempting to maximize the complex incomplete-data log-likelihood directly, the EM algorithm constructs a simplified lower bound function and maximizes that bound instead.

At its core, the algorithm operates on a simple philosophy: if we knew the exact values of the hidden variables, estimating the model parameters would be a trivial MLE problem. Conversely, if we knew the exact model parameters, estimating the distribution of the hidden variables would be straightforward. The EM algorithm leverages this duality by systematically alternating between two distinct phases. It first guesses the probability distribution of the hidden variables using the current parameter estimates. Then, it uses this approximated complete dataset to recalculate and update the parameters. This cyclical process is guaranteed to never decrease the likelihood of the model, systematically hill-climbing the probability landscape until it reaches a local maximum.

Core Architecture: How the EM Algorithm Works

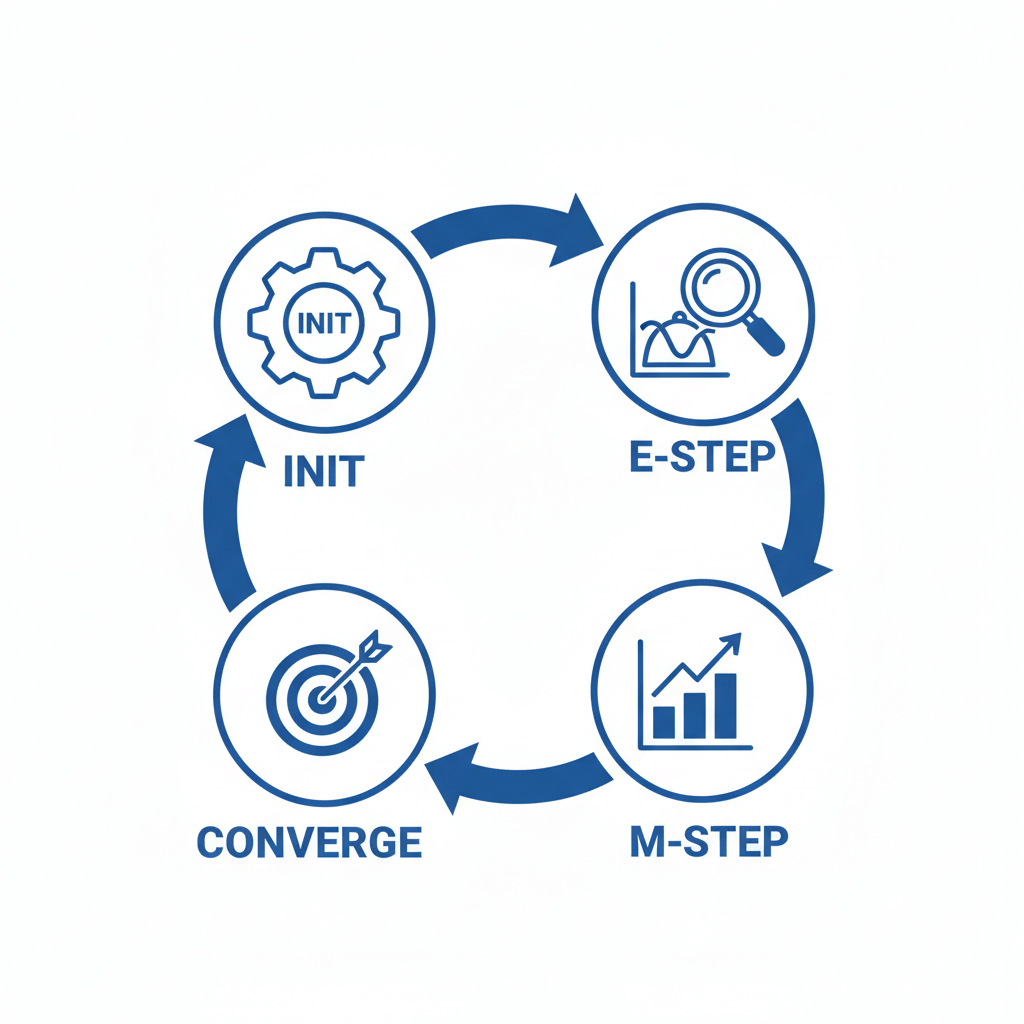

The Expectation-Maximization algorithm follows a rigid, four-step architectural flow: Initialization, the Expectation Step (E-Step), the Maximization Step (M-Step), and Convergence evaluation. Understanding the strict mathematical operations executed at each step is crucial for implementing custom probabilistic models.

1. Parameter Initialization

Before the algorithm can begin its iterative cycle, it requires a starting point. The initial parameters, denoted as θ0, are assigned starting values. Depending on the model, these values can be randomly generated from a uniform or normal distribution, or they can be derived using simpler, deterministic algorithms. For example, in clustering applications, it is highly common to run the K-Means algorithm first and use its output centroids to initialize the EM algorithm. Poor initialization can lead the algorithm to converge on a suboptimal local maximum, making the choice of θ0 a critical hyperparameter in practice.

2. The Expectation Step (E-Step)

The E-Step is responsible for estimating the missing data or latent variables based on the observed data X and the current parameter estimates θt. However, instead of assigning hard values to the latent variables, the E-Step computes the posterior probability distribution of the latent variables given the observed variables X and the current parameters θt:

Q(θ | θt) = E{Z|X, θ_t} [ log P(X, Z | θ) ]

In simpler terms, the algorithm calculates the expected log-likelihood of the complete dataset by weighting the possible outcomes of the hidden variables according to their current probability of occurring.

3. The Maximization Step (M-Step)

Once the E-Step has constructed the expected complete-data log-likelihood function Q(θ | θt), the M-Step treats this function as if it were standard, fully observable data. The objective of the M-Step is to find a new set of parameters, θ{t+1}, that strictly maximizes the Q function.

θ{t+1} = argmaxθ Q(θ | θ_t)

Because the Q function represents an expected complete dataset, the summation or integration resides outside of the logarithm, allowing the derivatives to be computed algebraically. The new parameters computed in this step are guaranteed to provide a better (or equal) fit to the observed data than the previous parameters θ_t.

4. Convergence Check

The algorithm evaluates whether the model has reached a stable state. This is determined by comparing the new log-likelihood to the previous log-likelihood. If the difference between L(θ{t+1}) and L(θt) is less than a strictly defined, infinitesimally small threshold (ε), the algorithm is deemed to have converged, and the execution halts. If the threshold is not met, the algorithm feeds θ_{t+1} back into the E-Step and repeats the cycle.

Mathematical Foundation and Proof of Convergence

One of the defining characteristics of the EM algorithm—and the reason it is highly respected in statistical machine learning—is its ironclad mathematical guarantee of monotonic convergence. Every iteration of the algorithm is mathematically proven to either increase the observed data log-likelihood or keep it the same; it will never degrade the model’s performance. Understanding why this happens requires looking into Jensen’s Inequality and Kullback-Leibler (KL) Divergence.

To maximize the incomplete data log-likelihood L(θ) = log P(X | θ), we can introduce an arbitrary probability distribution q(Z) over the latent variables. Through algebraic manipulation, the log-likelihood can be decomposed into two distinct components: an Evidence Lower Bound (ELBO) and the KL Divergence.

L(θ) = ELBO(q, θ) + D_KL ( q(Z) || P(Z | X, θ) )

The KL Divergence measures the difference between our arbitrary distribution q(Z) and the true posterior distribution of the latent variables P(Z | X, θ). By definition, KL Divergence is always non-negative (≥ 0). Therefore, the ELBO function serves as a strict mathematical lower bound to the true log-likelihood.

In the E-Step, the algorithm sets the distribution q(Z) to be exactly equal to the current posterior distribution P(Z | X, θ_t). When this happens, the KL Divergence becomes exactly zero. Consequently, the lower bound (ELBO) touches the true log-likelihood curve at the current parameter estimate.

In the M-Step, the algorithm maximizes the ELBO with respect to the parameters θ. Because the ELBO is a lower bound, and because it touched the true likelihood curve at the start of the step, any parameter update that pushes the ELBO higher must also push the true log-likelihood higher. This maximization-maximization mechanism guarantees that the likelihood increases monotonically, ensuring convergence to a stationary point (usually a local maximum).

Implementing EM: Gaussian Mixture Models (GMM)

The most prominent and universally taught application of the Expectation-Maximization algorithm is the Gaussian Mixture Model (GMM). A GMM is an unsupervised probabilistic clustering model that assumes all data points are generated from a mixture of a finite number of Gaussian (normal) distributions, each with its own unknown parameters.

While the K-Means algorithm performs “hard clustering” (where every data point is strictly assigned to exactly one cluster), GMMs utilize “soft clustering.” In a GMM, a data point does not belong to just one cluster; instead, it holds a probability of belonging to every cluster in the model. This makes GMMs incredibly powerful for datasets with overlapping clusters or elliptical distributions, where K-Means would critically fail. In a GMM, the cluster assignments for each data point act as the unobserved latent variables (Z), making the EM algorithm the perfect tool for optimization.

GMM Parameters

A Gaussian Mixture Model with K clusters relies on three parameters, collectively representing θ:

- πk (Mixing Coefficients): The prior probability that a data point comes from cluster k. The sum of all πk must equal 1.

- μ_k (Means): The center coordinate of the k-th Gaussian distribution.

- Σ_k (Covariance Matrices): The matrix defining the shape, variance, and orientation of the k-th Gaussian distribution.

Applying EM to GMM

The execution of the EM algorithm for a Gaussian Mixture Model unfolds as follows:

1. Initialization:

Select an initial set of parameters: π, μ, and Σ. Typically, K-Means is run to determine the initial μ, the covariance of the clusters becomes Σ, and π is set uniformly.

2. E-Step (Calculating Responsibilities):

For every data point xi and every cluster k, calculate the “responsibility” γik. This represents the posterior probability that point x_i was generated by cluster k.

γik = ( πk * N(xi | μk, Σk) ) / ( Σ{j=1 to K} πj * N(xi | μj, Σj) )

Where N is the multivariate Gaussian probability density function.

3. M-Step (Updating Parameters):

Using the responsibilities calculated in the E-Step, update the parameters to maximize the expected likelihood.

- Calculate the effective number of points assigned to cluster k:

Nk = Σ{i=1 to N} γ_ik - Update the Mixing Coefficients:

πk = Nk / N - Update the Means:

μk = ( 1 / Nk ) * Σ{i=1 to N} ( γik * x_i ) - Update the Covariance Matrices:

Σk = ( 1 / Nk ) * Σ{i=1 to N} ( γik * (xi – μk) * (xi – μk)^T )

4. Convergence:

Calculate the log-likelihood using the new parameters. If the log-likelihood has barely changed, terminate. Otherwise, repeat the E-Step.

Python Code Implementation

Below is a simplified, highly structured Python implementation of the EM algorithm applied to a 1-dimensional Gaussian Mixture Model, avoiding complex libraries to illustrate the underlying mathematics.

import numpy as np

from scipy.stats import norm

class GaussianMixture1D:

def __init__(self, k_clusters=2, max_iterations=100, tolerance=1e-4):

self.k = k_clusters

self.max_iter = max_iterations

self.tol = tolerance

def fit(self, X):

n_samples = X.shape[0]

# 1. Initialization

self.weights = np.ones(self.k) / self.k

self.means = np.random.choice(X, self.k)

self.variances = np.random.random_sample(self.k)

log_likelihood_old = 0

for iteration in range(self.max_iter):

# 2. E-Step: Calculate responsibilities

responsibilities = np.zeros((n_samples, self.k))

for j in range(self.k):

responsibilities[:, j] = self.weights[j] * norm.pdf(X, self.means[j], np.sqrt(self.variances[j]))

# Normalize responsibilities across clusters

row_sums = responsibilities.sum(axis=1)[:, np.newaxis]

responsibilities = responsibilities / row_sums

# 3. M-Step: Update parameters

N_k = responsibilities.sum(axis=0)

for j in range(self.k):

# Update weights

self.weights[j] = N_k[j] / n_samples

# Update means

self.means[j] = np.sum(responsibilities[:, j] * X) / N_k[j]

# Update variances

diff = X - self.means[j]

self.variances[j] = np.sum(responsibilities[:, j] * (diff ** 2)) / N_k[j]

# 4. Convergence Check

log_likelihood_new = np.sum(np.log(np.sum(responsibilities, axis=1)))

if np.abs(log_likelihood_new - log_likelihood_old) < self.tol:

print(f"Converged at iteration {iteration}")

break

log_likelihood_old = log_likelihood_new

# Example Usage

if __name__ == "__main__":

# Generate synthetic 1D data combining two distributions

np.random.seed(42)

data = np.concatenate([np.random.normal(0, 1, 300), np.random.normal(5, 1.5, 700)])

gmm = GaussianMixture1D(k_clusters=2)

gmm.fit(data)

print("Estimated Means:", gmm.means)

Applications of the EM Algorithm in Machine Learning

The Expectation-Maximization algorithm is not a singular model; rather, it is a versatile meta-algorithm that forms the optimization backbone for numerous machine learning architectures. Its ability to navigate probabilistic spaces makes it indispensable across various domains of artificial intelligence and data science.

- Hidden Markov Models (HMMs): In sequential data processing such as speech recognition and time-series forecasting, Hidden Markov Models assume that the system is a Markov process governed by unobserved states. The Baum-Welch algorithm, which is a specialized variant of the EM algorithm, is utilized to find the unknown transition and emission probabilities of the HMM.

- Missing Data Imputation: When datasets are plagued with missing values (NaNs), dropping rows can result in critical information loss. The EM algorithm is frequently used to estimate the underlying distribution of the dataset and probabilistically infer (impute) the missing values, preserving the covariance structures better than simple mean or median imputation.

- Natural Language Processing (NLP): In topic modeling, the Latent Dirichlet Allocation (LDA) algorithm attempts to discover hidden semantic structures within massive collections of documents. EM variants, specifically Variational EM, are deployed to estimate the document-topic distributions and topic-word distributions, as the actual topics are latent variables.

- Medical Image Reconstruction: In Positron Emission Tomography (PET) and Computed Tomography (CT) scans, the images must be reconstructed from noisy sensor data. The actual origin points of radioactive decay are unobservable. The EM algorithm is rigorously applied to iteratively reconstruct high-resolution images by estimating the hidden source of the emissions.

Advantages and Disadvantages of EM Algorithm

While the EM algorithm is mathematically elegant and provides robust solutions for latent variable models, it is inherently an optimization heuristic and comes with specific computational trade-offs. Engineers must carefully weigh these pros and cons before deploying EM-based models in production environments.

| Criteria | Advantages | Disadvantages |

|---|---|---|

| Convergence Guarantee | Mathematically guaranteed to increase or maintain the model’s likelihood on every single iteration, ensuring stable execution without divergence. | Only guaranteed to converge to a local maximum, not the global maximum. It is highly sensitive to the initial parameter configuration. |

| Implementation Complexity | The E-step and M-step can often be expressed in highly analytical, closed-form equations, making it straightforward to code for models like GMMs. | Deriving the specific mathematical E and M formulas for novel, custom probabilistic distributions can be intensely mathematically demanding. |

| Handling Incomplete Data | Exceptionally powerful for unsupervised learning and situations with heavily missing data, extracting underlying patterns seamlessly. | The algorithm can suffer from exceedingly slow convergence rates, particularly when the amount of missing or latent data is exceptionally large compared to the observed data. |

| Memory and Compute | Does not require computing the Hessian matrix (second-order derivatives), saving significant memory overhead compared to Newton-Raphson methods. | Each iteration requires a full pass over the entire dataset to compute posterior probabilities, which becomes computationally expensive for massive, high-dimensional data. |

Variants of the Expectation-Maximization Algorithm

As datasets have grown in scale and statistical models have increased in complexity, the traditional EM algorithm has exposed certain limitations, particularly regarding execution speed and intractable integrals. To address these bottlenecks, researchers have engineered several advanced variants.

Generalized EM (GEM)

In highly complex models, executing the strict M-Step—finding the absolute maximum parameter set—can be mathematically impossible or computationally prohibitive. The Generalized Expectation-Maximization (GEM) algorithm relaxes this constraint. Instead of requiring the M-Step to find the maximum parameters, GEM only requires that the new parameters produce a higher Q-function value than the previous parameters. By simply improving the parameters rather than fully maximizing them, GEM significantly accelerates each iteration while still retaining the overall guarantee of convergence.

Stochastic EM (SEM)

When the latent variable space is extremely vast, calculating the exact expected value during the E-Step becomes computationally infeasible. The Stochastic EM algorithm replaces the exact E-Step with a Monte Carlo sampling technique. It randomly draws samples from the posterior distribution of the hidden variables to approximate the expected log-likelihood. While this introduces randomness and prevents strictly monotonic convergence, it allows the algorithm to escape local maxima and scale to models with intractable posteriors.

Online / Incremental EM

The standard EM algorithm is a batch-processing technique; it requires loading the entire dataset into memory to perform a single update. This is impossible for streaming data architectures or massive petabyte-scale datasets. Online EM algorithms continuously update the parameters incrementally as new data points (or mini-batches) arrive. By utilizing stochastic gradient approximations, Online EM heavily reduces memory constraints and allows models to adapt dynamically in real-time environments.

Frequently Asked Questions (FAQs)

Does the EM algorithm always find the best possible solution?

No. The Expectation-Maximization algorithm is strictly guaranteed to converge to a local maximum or a saddle point, not necessarily the global maximum. Because the likelihood surface of latent variable models is highly non-convex, the algorithm’s final output heavily depends on the initialization parameters. It is common practice to run the EM algorithm multiple times with different random seeds and select the model that yields the highest overall likelihood.

What is the difference between K-Means and the EM Algorithm?

K-Means is essentially a simplified, specialized case of the EM algorithm applied to Gaussian Mixture Models. K-Means performs “hard assignment” (a point belongs to exactly one cluster) and assumes all clusters share the same spherical shape and volume (identical, isotropic covariance). The standard EM applied to GMMs performs “soft assignment” (probabilistic weighting) and can adapt to elliptical cluster shapes and varying variances.

Why is Jensen’s Inequality important in the EM algorithm?

Jensen’s Inequality is the foundational mathematical theorem that allows the EM algorithm to construct its Evidence Lower Bound (ELBO). It states that for any strictly concave function, such as a logarithm, the log of an expected value is greater than or equal to the expected value of the log. This property is what mathematically proves that maximizing the surrogate Q-function in the M-Step strictly guarantees an increase in the true data log-likelihood.

Can the EM Algorithm be used for Supervised Learning?

While EM is predominantly recognized as an unsupervised learning technique (due to its association with clustering and latent variables), it can also be utilized in supervised and semi-supervised learning scenarios. In semi-supervised learning, EM can treat the labels of the unlabeled data as the latent variables, iteratively guessing their classes and updating the decision boundaries to leverage vast amounts of unlabeled data alongside smaller, labeled datasets.